OpenAI’s Sora 2 is setting a new standard in AI-generated video, offering developers, engineers, and creators the ability to turn simple text prompts into highly realistic, physically plausible videos, complete with synchronized dialogue and immersive soundscapes. This isn’t just a leap for filmmakers; it’s a shift in how backend teams, API developers, and technical leads might prototype, test, and collaborate on media-rich applications.

In this article, we’ll break down Sora 2’s technical features, its companion social app (the Sora App), and the impact on developer teams looking to use AI for next-generation media tools. We’ll also explore how integrated platforms like Apidog can streamline your workflow when working with the APIs that power these AI systems.

What Is Sora 2? A Technical Overview

OpenAI Sora 2 is a multimodal AI model designed to generate highly realistic video and audio sequences from a text prompt. Unlike earlier models that often produced warped visuals or ignored basic physics, Sora 2 delivers:

- Physics-Based Simulations: Accurate modeling of motion, object interaction, and failure states (e.g., a gymnast missing a landing).

- Audio-Visual Sync: Synchronized dialogue, ambient sounds, and effects generated alongside the video.

- Diverse Visual Styles: From photorealistic to anime-inspired, Sora 2 adapts to varied creative needs.

This realism is a result of extensive pre-training on large-scale, high-quality video and audio datasets, followed by targeted fine-tuning for audio synchronization and physics modeling.

Key Features for Technical Teams

1. Physics Realism and Failure Modeling

Sora 2 doesn’t just create perfect scenes; it models real-world unpredictability. For developers, this means:

- More authentic sports and action sequences

- Simulated edge cases for testing applications (e.g., object collisions, missed attempts)

- Grounded outputs for use in training data or visual QA

2. Real-World Cameos and Asset Injection

One standout feature: you can insert real people, animals, or objects into AI-generated scenes. After you upload a short video and audio recording of a subject, Sora 2 can reproduce that subject’s likeness and voice, enabling:

- Easy prototyping of user-personalized media applications

- Dynamic demos for client presentations

- Testing identity-based features in social or collaborative tools

3. Versatile Output Styles

From cinematic realism to stylized animation, Sora 2’s flexibility is valuable for:

- Rapid prototyping across genres

- Creating diverse datasets for machine learning

- Exploring user experience and UI designs with varied aesthetics

OpenAI highlights this versatility:

“Sora 2 can render a range of styles—realistic, cinematic, and even anime—with synchronized sound.”

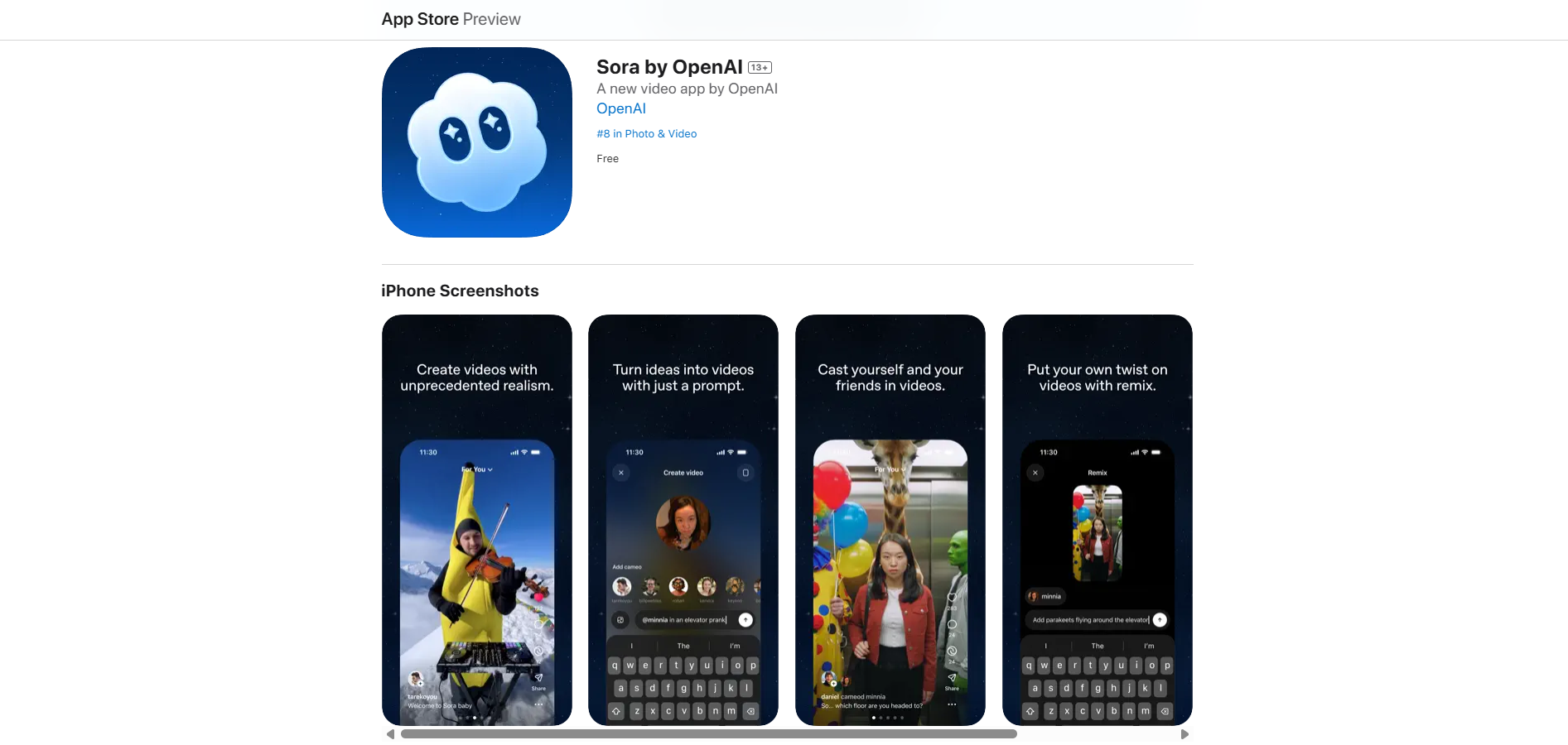

The Sora App: Social Platform for AI Video Creation

OpenAI’s Sora App, currently invite-only on iOS, brings Sora 2’s capabilities into a social setting. The app lets users:

- Generate and remix videos in a collaborative environment

- Explore a feed designed for inspiration, not endless scrolling

- Cameo themselves or others (with permission), appearing as characters in a wide range of settings

Developer Implications

- Enables rapid user testing of AI video features

- Facilitates team-based ideation and feedback loops

- Offers a model for building responsible, creativity-focused social platforms

Responsible AI: Safety, Moderation, and Wellbeing

OpenAI has made safety a central part of the Sora 2 and Sora App rollout. Its approach is a useful reference for any team integrating generative AI:

- Algorithmic Design: The app prioritizes creative exploration, not passive consumption.

- Safeguards: Daily limits for teens, stricter cameo permissions, parental controls, and proactive wellbeing checks.

- Moderation: A combination of human review and automated systems to address misuse, bullying, and unauthorized use of a person’s likeness.

For developers, this offers a blueprint for integrating robust safety features, whether you are building internal tools or public-facing products.

Technical Insight & Future Roadmap

Under the hood, Sora 2 relies on scaling up neural network training on large video datasets, an approach OpenAI frames as a path toward realistic world simulation. This opens new opportunities:

- Advanced Editing and API Access: Planned additions include more advanced creative controls and a Sora 2 API, which would offer programmatic access for integration and automation.

- Gradual Rollout: The app and model will expand geographically as safety and community guidelines mature.

- Toward World Simulators: OpenAI positions Sora 2 as a step toward general-purpose AI systems that can understand and interact with the physical world, with potential applications in robotics, simulation, and intelligent automation.

If you’re building, testing, or scaling APIs for media-rich, AI-driven platforms, robust documentation and team collaboration are essential. Tools like Apidog can generate polished API documentation, support team collaboration, and serve as a lower-cost alternative to Postman, helping your team keep pace as AI capabilities evolve.

Conclusion

OpenAI’s Sora 2 is more than just a video generator; it’s a new platform for simulation, storytelling, and collaborative creativity. For developer and API-centric teams, Sora 2 and the Sora App point to a near future where AI-powered media creation is highly accessible, responsible, and integrated into modern workflows. As generative video tools advance, efficient API development platforms like Apidog will be crucial for staying ahead.