Tokenization is the process of exchanging sensitive data for non-sensitive placeholders called tokens. A token can retain the format or length of the original data but holds no exploitable value on its own. In API security, tokenization is a strong defense: when a user submits payment details, medical records, or personal information through an API, the system replaces the sensitive values with tokens before the data is stored or processed further.

How does tokenization work in practice? The standard workflow generally involves four steps:

- Data Capture: Sensitive information enters the system via a secure API request from a user or application.

- Token Generation: A secure token generator creates a random string of characters, the token, to stand in for the sensitive data. The token has no mathematical relationship to the original input.

- Secure Storage: The original data is sent to a highly secure, isolated database known as a token vault. The vault's only job is to map each token back to the real data.

- Data Replacement: The system removes the sensitive data from its other servers and databases and uses only the token for all subsequent operations.
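The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `TokenVault`, `handle_payment_request`, the `tok_` prefix, and the `card_number` field are hypothetical names chosen for the example, and the in-memory dictionary stands in for what would be an isolated, access-controlled datastore.

```python
import secrets


class TokenVault:
    """In-memory stand-in for an isolated token vault (illustration only)."""

    def __init__(self):
        # token -> original value; a real vault would be a separate, hardened store
        self._token_to_value = {}

    def tokenize(self, sensitive_value: str) -> str:
        # Token generation: the token is random, so it is not derived from the input.
        token = "tok_" + secrets.token_urlsafe(16)
        # Secure storage: only the vault keeps the token -> value mapping.
        self._token_to_value[token] = sensitive_value
        return token

    def detokenize(self, token: str) -> str:
        # Raises KeyError if the token is unknown to this vault.
        return self._token_to_value[token]


def handle_payment_request(payload: dict, vault: TokenVault) -> dict:
    # Data capture: the card number arrives in the request payload.
    sanitized = dict(payload)
    # Data replacement: downstream code only ever sees the token.
    sanitized["card_number"] = vault.tokenize(sanitized["card_number"])
    return sanitized
```

Calling `handle_payment_request({"card_number": "4111111111111111", "amount": 25}, vault)` returns a copy of the payload whose `card_number` is a `tok_...` string; the original number is recoverable only through `vault.detokenize`.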

Stolen tokens have very little value to cybercriminals, because a random token cannot be deciphered or mathematically reversed; recovering the original data requires controlled access to the token vault. This makes tokenization an essential practice for organizations handling payment processing, healthcare records, or sensitive financial assets. It can also shrink compliance scope: systems that store and handle only tokens may fall outside PCI DSS scope, and under GDPR tokenization counts as pseudonymization, which lowers risk even though the tokenized data is generally still treated as personal data. Used this way, tokenization lets businesses keep daily operations running without exposing the underlying values.

Tokenization vs Encryption: Which Offers Better API Security?

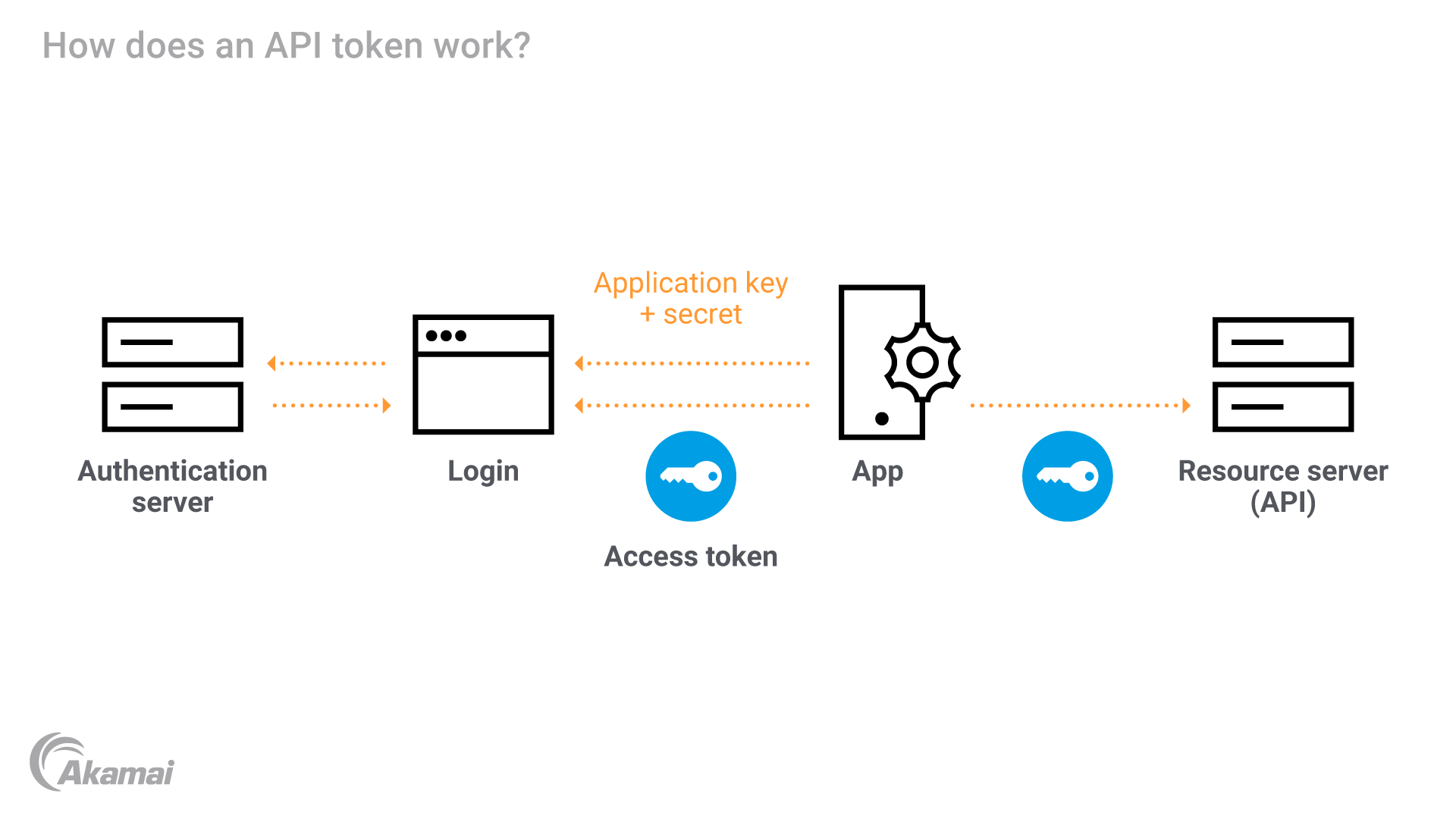

When discussing data protection, developers and business leaders often confuse tokenization with encryption. Both aim to protect sensitive information, but their core mechanisms differ significantly. Understanding the differences is vital for building a robust security architecture.

Encryption transforms plaintext into an unreadable format (ciphertext) using a mathematical algorithm and a cryptographic key. Anyone holding the correct decryption key can reverse the process and read the original data, so if an attacker steals the key, every record encrypted under it is exposed. Encryption works well for protecting data in transit, but its protection is only as strong as the key management behind it.

Tokenization, on the other hand, replaces sensitive data with a randomly generated token that has no mathematical relationship to the original input. A token cannot be decrypted, because no key or reversible transformation was used to derive it from the data. The only way to retrieve the original data is to look the token up in the protected token vault where the mapping is stored.

Here is a side-by-side comparison of the two:

| Feature | Tokenization | Encryption |
|---|---|---|
| Reversibility | Not reversible without access to the token vault | Reversible with the correct decryption key |
| Data Relationship | Randomly generated; no mathematical link to the original | Mathematically derived from the original using a key |
| Compliance Scope | Can significantly reduce compliance scope | Encrypted data generally remains in scope |
| Speed and Efficiency | Typically fast for transactional processing | Can be resource-intensive for large volumes of data |
| Primary Use Cases | Payment processing, API security, and databases | Secure file transfers, emails, and data in transit |

Choosing between tokenization and encryption depends on specific organizational needs, and many systems use both. For protecting stored credit card details behind API endpoints, however, tokenization has a clear advantage: a breach of a system that holds only tokens exposes no usable card data.

Top Tokenization Use Cases, Benefits, and Examples

Adoption of tokenization has grown across many industries in recent years. Organizations use it to protect consumer privacy, streamline internal operations, and limit the impact of a data breach. Several prominent use cases show how flexible the technique is.

One of the most common examples is in retail and e-commerce. When a customer saves their credit card on a shopping website for future purchases, the merchant does not store the actual card number; it stores a token instead. If the merchant's customer database is compromised, the attackers get tokens that cannot be used as card numbers, and the customer's actual card details stay protected.

Another major use case is healthcare. Medical facilities handle vast amounts of protected health information daily. By tokenizing patient IDs, Social Security numbers, and medical record numbers, hospitals can share data across their networks with less risk of exposing the underlying identifiers, which supports HIPAA compliance.
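As a rough sketch of field-level tokenization for a record like the ones described above, the function below replaces only the identifying fields and leaves the rest untouched. Everything here is an assumption made for illustration: the field names in `SENSITIVE_FIELDS`, the `tokenize_record` helper, and the two plain dictionaries standing in for a real vault. It reuses an existing token when the same value is seen again, so tokenized records can still be matched to each other without revealing the identifier.

```python
import secrets

# Hypothetical field names; a real schema would define its own list.
SENSITIVE_FIELDS = {"patient_id", "ssn", "medical_record_number"}


def tokenize_record(record: dict, vault: dict, reverse_index: dict) -> dict:
    """Return a copy of `record` with sensitive fields replaced by tokens.

    `vault` maps token -> original value.
    `reverse_index` maps (field, value) -> token so repeated values reuse a token.
    """
    safe = {}
    for field, value in record.items():
        if field not in SENSITIVE_FIELDS:
            safe[field] = value  # non-sensitive data passes through unchanged
            continue
        key = (field, value)
        token = reverse_index.get(key)
        if token is None:
            # Random token with the field name as a readable prefix.
            token = f"{field}_{secrets.token_hex(8)}"
            vault[token] = value
            reverse_index[key] = token
        safe[field] = token
    return safe
```

Reusing tokens for repeated values is a design choice: it keeps records joinable, at the cost of revealing that two records share an identifier. Whether that trade-off is acceptable depends on the data and the applicable rules.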

Key benefits of tokenization include:

- Enhanced Security Profile: Cybercriminals cannot monetize or exploit tokens on their own. Storing tokens instead of raw data lowers the risk associated with data theft.

- Cost-Effective Compliance: Tokenization limits the number of systems that touch sensitive data. This can reduce the scope and cost of compliance audits for standards like PCI DSS.

- Improved Customer Experience: Businesses can offer smooth checkout, recurring billing, and one-click payments without storing raw card data.

- Operational Agility: Format-preserving tokens keep the length and character set of the original data, so they can pass through legacy systems and modern APIs without expensive overhauls.
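To illustrate the format-preservation point, here is a minimal sketch of a token that keeps the length, separators, and last four digits of a card number while randomizing the rest. The function name `format_preserving_token` and the `keep_last` parameter are hypothetical. The sketch omits two things a real system would need: storing the token-to-value mapping in the vault, and checking that a generated token does not collide with an existing token or a real card number.

```python
import secrets


def format_preserving_token(card_number: str, keep_last: int = 4) -> str:
    """Replace all but the last `keep_last` digits with random digits.

    Non-digit characters (spaces, dashes) are kept in place, so the token
    has the same length and layout as the input.
    """
    total_digits = sum(ch.isdigit() for ch in card_number)
    to_replace = max(total_digits - keep_last, 0)
    out = []
    seen = 0  # number of digits processed so far
    for ch in card_number:
        if ch.isdigit():
            seen += 1
            if seen <= to_replace:
                out.append(str(secrets.randbelow(10)))  # random digit 0-9
            else:
                out.append(ch)  # trailing digits kept for display
        else:
            out.append(ch)
    return "".join(out)
```

For an input such as `"4111-1111-1111-1234"`, the result is a 19-character string with dashes in the same positions and ending in `1234`, which is why it can fit into fields and validation rules written for card numbers.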

Integrating tokenization directly into your architecture keeps sensitive data out of the systems attackers are most likely to reach. This changes how your organization handles data and helps protect both your business reputation and your users' trust.

Apidog: The Ultimate Platform for Designing and Testing APIs with Auth

Implementing tokenization in API security requires reliable tooling. To build secure systems, you need a platform to design, debug, and test your endpoints. This is where Apidog, an API development and automated testing platform, comes in.

Apidog is a comprehensive platform built for API design, documentation, debugging, and mock testing. When building tokenization tools or integrating tokenization services, developers need to verify that their APIs handle generated tokens correctly. Apidog offers an intuitive visual interface for this: you can define endpoint structures, outline security schemes, and set the request parameters for all tokenized interactions.

With Apidog, you can configure authentication methods including OAuth 2.0, Bearer tokens, and custom API keys. The platform shows how your API expects to receive and process a token, which makes it a useful asset for engineering teams.

Apidog also supports automated testing. You can create test scenarios to validate that your API correctly exchanges raw sensitive data for tokens. The platform supports environment variables, so you can pass tokens between API requests during testing. This simulates real-world, multi-step user flows and helps confirm that your tokenization logic holds up.
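The shape of such a chained test scenario can be sketched in plain Python. This is not Apidog's scripting API: `post_tokenize` and `post_charge` are hypothetical in-process stand-ins for two endpoints, and the `env` dictionary plays the role that environment variables play when a token from one request is reused in the next.

```python
import secrets

_vault = {}  # stand-in for the service's token vault


def post_tokenize(body: dict) -> dict:
    """Hypothetical POST /tokenize handler: stores the value, returns a token."""
    token = "tok_" + secrets.token_hex(12)
    _vault[token] = body["card_number"]
    return {"status": 201, "token": token}


def post_charge(body: dict) -> dict:
    """Hypothetical POST /charges handler: accepts known tokens only."""
    if body.get("payment_token") not in _vault:
        return {"status": 422, "error": "unknown token"}
    return {"status": 200, "charged": body["amount"]}


def run_scenario() -> dict:
    env = {}  # shared variables passed between steps

    # Step 1: exchange raw data for a token and save it for later steps.
    r1 = post_tokenize({"card_number": "4111111111111111"})
    assert r1["status"] == 201
    assert r1["token"].startswith("tok_")
    env["payment_token"] = r1["token"]

    # Step 2: reuse the saved token in a follow-up request.
    r2 = post_charge({"payment_token": env["payment_token"], "amount": 1999})
    assert r2["status"] == 200

    # Step 3: negative case, a token the vault has never issued is rejected.
    r3 = post_charge({"payment_token": "tok_bogus", "amount": 1999})
    assert r3["status"] == 422

    return env
```

The same three checks (a token is issued, the token is accepted downstream, an unknown token is rejected) are a reasonable starting point for a scenario in any API testing tool.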

Choosing Apidog means choosing efficiency and reliability. The application checks your API design against the OpenAPI specification and uses AI-powered checks to catch errors early. Use Apidog to design, test, and deploy robust APIs that follow tokenization best practices. Sign up for Apidog today to streamline your workflow.

Conclusion

Tokenization represents a major step forward in data protection. As cyber threats become more sophisticated and damaging, traditional measures like encryption can no longer stand alone. By understanding what tokenization is, businesses can remove highly sensitive data from their internal networks and replace it with placeholders that are useless to an attacker.

Throughout this guide, we explored how tokenization works, highlighted the differences between tokenization and encryption, and summarized the benefits across e-commerce, healthcare, and finance. The use cases discussed show that tokenization not only secures daily operations but can also reduce the cost and burden of regulatory compliance. Incorporating tokenization best practices into your infrastructure is no longer an optional luxury; it is a fundamental requirement for any organization that values user privacy and API security.

When you implement tokenization, your APIs are the backbone of the integration. They must be designed carefully from the ground up and tested rigorously before deployment. Apidog provides a comprehensive solution for this: its API design, documentation, and automated testing features help development teams build secure, reliable integrations and verify that tokenized workflows behave as intended.

Take control of your data security today. Protect your sensitive information, defend your customers, and build world-class APIs with confidence. Download Apidog and sign up to get started.