Data drives modern business decisions, but only when it’s accurate, complete, and timely. ELT Testing ensures that the data flowing through your pipelines—whether into data lakes, warehouses, or analytics platforms —meets the specified standards. ELT (Extract, Load, Transform) has become the dominant pattern for modern data integration, yet many teams struggle to test it effectively. This guide provides a practical framework for validating ELT pipelines at every stage.

What is ELT and How It Differs from ETL

ELT (Extract, Load, Transform) flips the traditional ETL sequence. Instead of transforming data before loading, you extract raw data from source systems, load it directly into your target (data lake or warehouse), then transform it in-place using the target’s compute power.

| Stage | ETL Pattern | ELT Pattern |

|---|---|---|

| Extract | Pull data from sources | Pull data from sources |

| Transform | Clean/modify in staging | Happens in target system |

| Load | Push transformed data | Push raw data first |

ELT Testing must validate each stage: extraction completeness, loading integrity, and transformation accuracy—all while ensuring performance and data quality.

Why ELT Testing Matters: The Business Impact

Poorly tested ELT pipelines create cascading problems:

- Data Corruption: A single transformation bug can propagate incorrect metrics to executive dashboards, leading to million-dollar misdecisions.

- Compliance Risk: GDPR and HIPAA require you to prove data lineage and accuracy. ELT Testing provides audit trails.

- Performance Degradation: Untested pipelines that process terabytes daily can silently slow down, missing SLA windows.

- Trust Erosion: When business teams discover data quality issues, they stop trusting the analytics platform entirely.

A retail company once discovered that 15% of their sales data was missing from reports because an ELT Testing gap failed to catch a schema change in their source system. The impact: incorrect inventory planning and stockouts during peak season.

How ELT Testing is Performed: A Phase-by-Phase Approach

ELT Testing follows the data journey from source to consumption. Here’s how to validate each phase:

Phase 1: Extraction Testing

Verify that data is completely and accurately pulled from source systems.

Test Cases:

- Completeness: Count records extracted vs. source system

- Schema Validation: Ensure source schema hasn’t changed unexpectedly

- Data Type Correctness: Numbers remain numbers, dates remain dates

- Incremental Extraction: Only new/changed records are pulled

# Extraction completeness test

def test_extraction_completeness():

source_count = source_db.query("SELECT COUNT(*) FROM orders WHERE date = '2024-01-01'")

extracted_count = staging_area.query("SELECT COUNT(*) FROM raw_orders WHERE date = '2024-01-01'")

assert extracted_count == source_count, f"Missing {source_count - extracted_count} records"

Phase 2: Loading Testing

Validate that raw data lands correctly in the target system without corruption.

Test Cases:

- Load Success: All extracted records are loaded

- Data Integrity: No truncation, encoding issues, or corruption

- Partitioning: Data lands in correct partitions/buckets

- Duplicate Detection: No duplicate records introduced

-- Loading integrity test

SELECT

source_table,

COUNT(*) as loaded_records,

SUM(CASE WHEN loaded_at IS NULL THEN 1 ELSE 0 END) as failed_records

FROM raw_data_audit

WHERE load_date = CURRENT_DATE

GROUP BY source_table

HAVING failed_records > 0;

Phase 3: Transformation Testing

Verify that business logic correctly transforms raw data into analytics-ready format.

Test Cases:

- Business Rule Accuracy: Calculations match specifications

- Referential Integrity: Foreign keys resolve correctly

- Data Quality: Null handling, default values, cleansing

- Aggregation Logic: Sums, counts, averages are mathematically correct

-- Transformation accuracy test

SELECT

order_id,

raw_amount,

calculated_tax,

(raw_amount * 0.08) as expected_tax

FROM transformed_orders

WHERE ABS(calculated_tax - (raw_amount * 0.08)) > 0.01

Phase 4: End-to-End Validation

Run the entire pipeline and validate final outputs against business expectations.

Test Cases:

- Report Accuracy: Final dashboards show correct KPIs

- Reconciliation: Aggregated totals match source system

- Timeline: Data freshness meets SLA (e.g., within 2 hours)

- Downstream Impact: BI tools can query transformed data without errors

ELT Testing vs Traditional Data Testing

ELT Testing differs from traditional data warehouse testing in key ways:

| Aspect | Traditional ETL Testing | ELT Testing |

|---|---|---|

| Test Location | Staging layer | Target system (Snowflake, BigQuery) |

| Performance Focus | Transformation engine | Target compute efficiency |

| Schema Changes | Handled in ETL tool | Tested in target system |

| Tools | ETL-native testers | SQL-based + API-based tools |

Modern ELT Testing requires you to validate SQL transformations inside cloud warehouses, monitor API data ingestion endpoints, and track data lineage across schema-on-read architectures.

Tools for ELT Testing

SQL-Based Testing:

- dbt (data build tool) with built-in tests

- Great Expectations for data quality

- custom SQL scripts in target warehouse

API-Based Testing (Critical for ELT):

- Apidog for ingestion API validation and ad-hoc API checks

- custom scripts for webhook monitoring

Orchestration Testing:

- Apache Airflow task validation

- Prefect flow testing

How Apidog Helps with ELT Testing

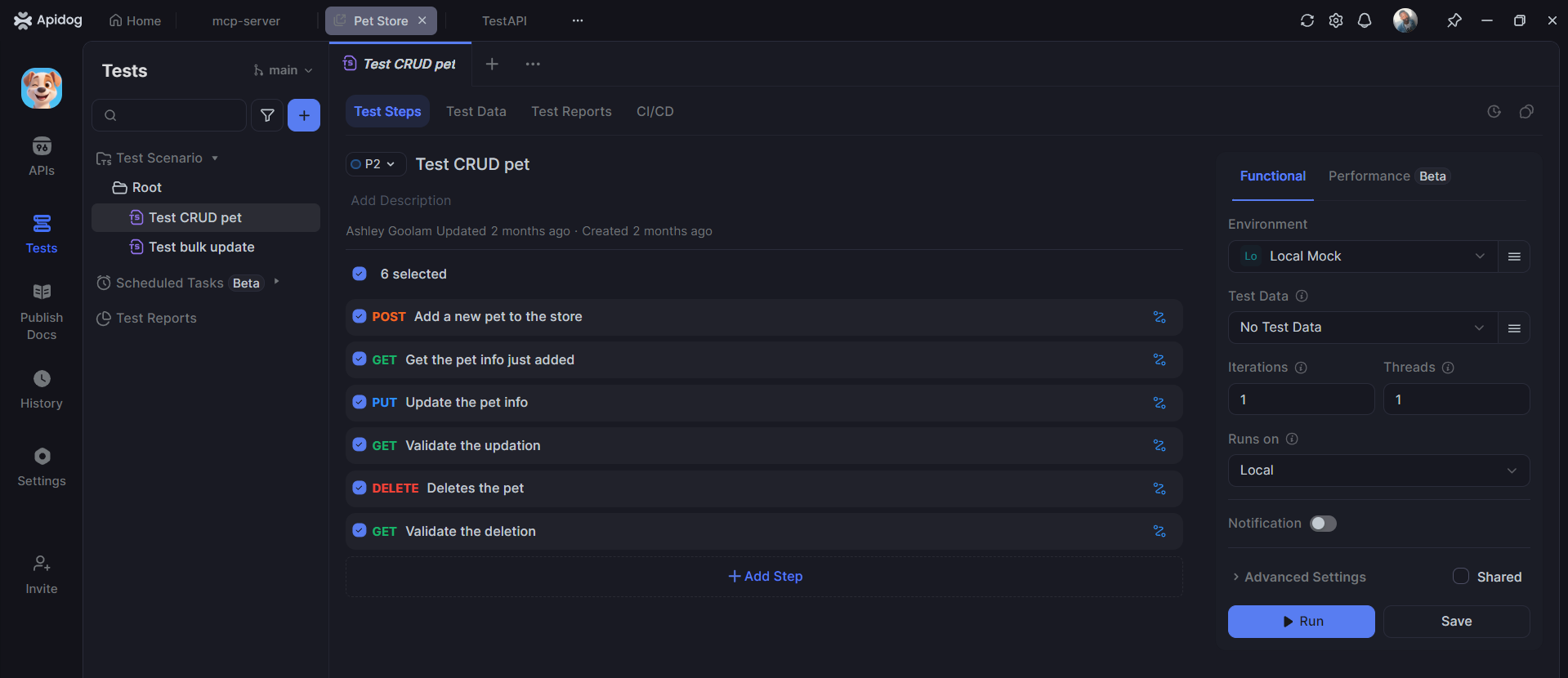

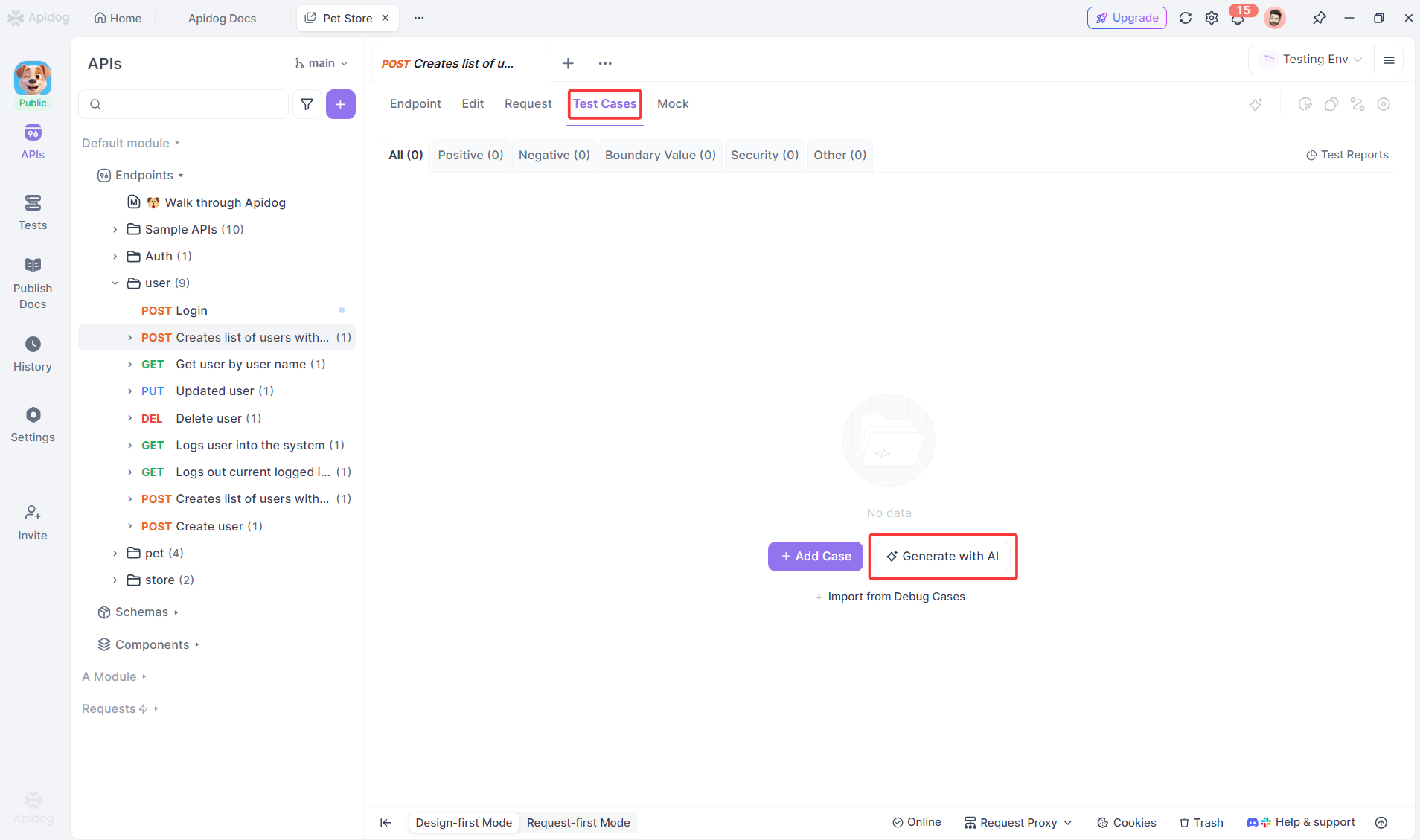

While SQL tools handle transformations, Apidog excels at testing the API layer of ELT pipelines—critical for modern data ingestion and monitoring.

Testing Data Ingestion APIs

Most ELT pipelines use APIs to extract data. Apidog automates validation of these endpoints:

# Apidog test for data ingestion API

Test: POST /api/v1/extract/orders

Given: Valid API key and date range

When: Request sent with parameters {"start_date": "2024-01-01", "end_date": "2024-01-31"}

Test 1: Response status 202 (accepted for processing)

Test 2: Response contains job_id for tracking

Test 3: Webhook notification received within 5 minutes

Test 4: Data appears in staging table

Apidog’s advantages for ELT Testing:

- Automatic test generation from OpenAPI specs

- Visual workflow builder for complex pipelines

- Environment management for dev/staging/prod data warehouses

- CI/CD integration to run data quality checks on schedule

- Detailed logging for audit trails

Best Practices for ELT Testing

- Test incrementally: Validate extraction before loading, load before transforming

- Monitor continuously: Run data quality checks every hour, not just once

- Version control tests: Store SQL tests in Git alongside transformation code

- Test in production-like environment: Use production data volume in staging

- Automate reconciliation: Compare source and target counts automatically

- Alert on anomalies: Notify when row counts deviate >5% from historical average

- Document data lineage: Track how each field transforms from raw to final

Frequently Asked Questions

Q1: How often should we run ELT tests?

Ans: Extraction tests run with every pipeline execution. Data quality tests run continuously (hourly). Full end-to-end validation runs at least once daily.

Q2: Who is responsible for ELT Testing—data engineers or QA?

Ans: Data engineers own the tests because they understand the transformations. QA provides frameworks and validates business logic outcomes.

Q3: Can Apidog replace SQL-based ELT testing?

Ans: No. Apidog complements SQL testing by validating the API layer (ingestion, monitoring, orchestration). You still need SQL tests for transformations inside the warehouse.

Q4: How do we test ELT pipelines that process terabytes of data?

Ans: Test on a statistically significant sample (e.g., 1% of data) rather than full volume. Use data profiling to verify distributions match expectations.

Q5: What’s the most critical ELT test to implement first?

Ans: End-to-end row count reconciliation. If source and destination record counts don’t match, nothing else matters. This test catches the majority of pipeline failures.

Conclusion

ELT Testing is non-negotiable for data-driven organizations. Unlike traditional software testing where bugs affect features, data pipeline bugs affect business decisions, compliance, and revenue. A systematic approach—testing extraction, loading, transformation, and end-to-end flows—prevents costly data corruption and builds trust in your analytics platform.

Modern ELT pipelines rely heavily on APIs for ingestion and monitoring. Apidog automates the tedious work of testing these APIs, letting data engineers focus on transformation logic while ensuring the pipeline’s entry and exit points are validated continuously. The combination of SQL-based transformation testing and Apidog’s API automation creates a comprehensive safety net for your most critical business asset: data.

Start with reconciliation testing. Add data quality checks. Automate API validation. Your future self—and your business stakeholders—will thank you when the board presentation shows accurate numbers.