Looking to standardize how your AI models interact with external tools and APIs? The Model Context Protocol (MCP) offers a unified protocol, but integrating MCP-compliant tools into advanced agent frameworks like LangChain and LangGraph can be challenging—until now.

In this guide, you'll learn how to bridge MCP tools into the LangChain ecosystem using the langchain-mcp-adapters library. Whether you're building robust AI agents, orchestrating multiple tool servers, or scaling modular AI applications, this article provides a practical, step-by-step approach tailored for API developers, backend engineers, and technical leads.

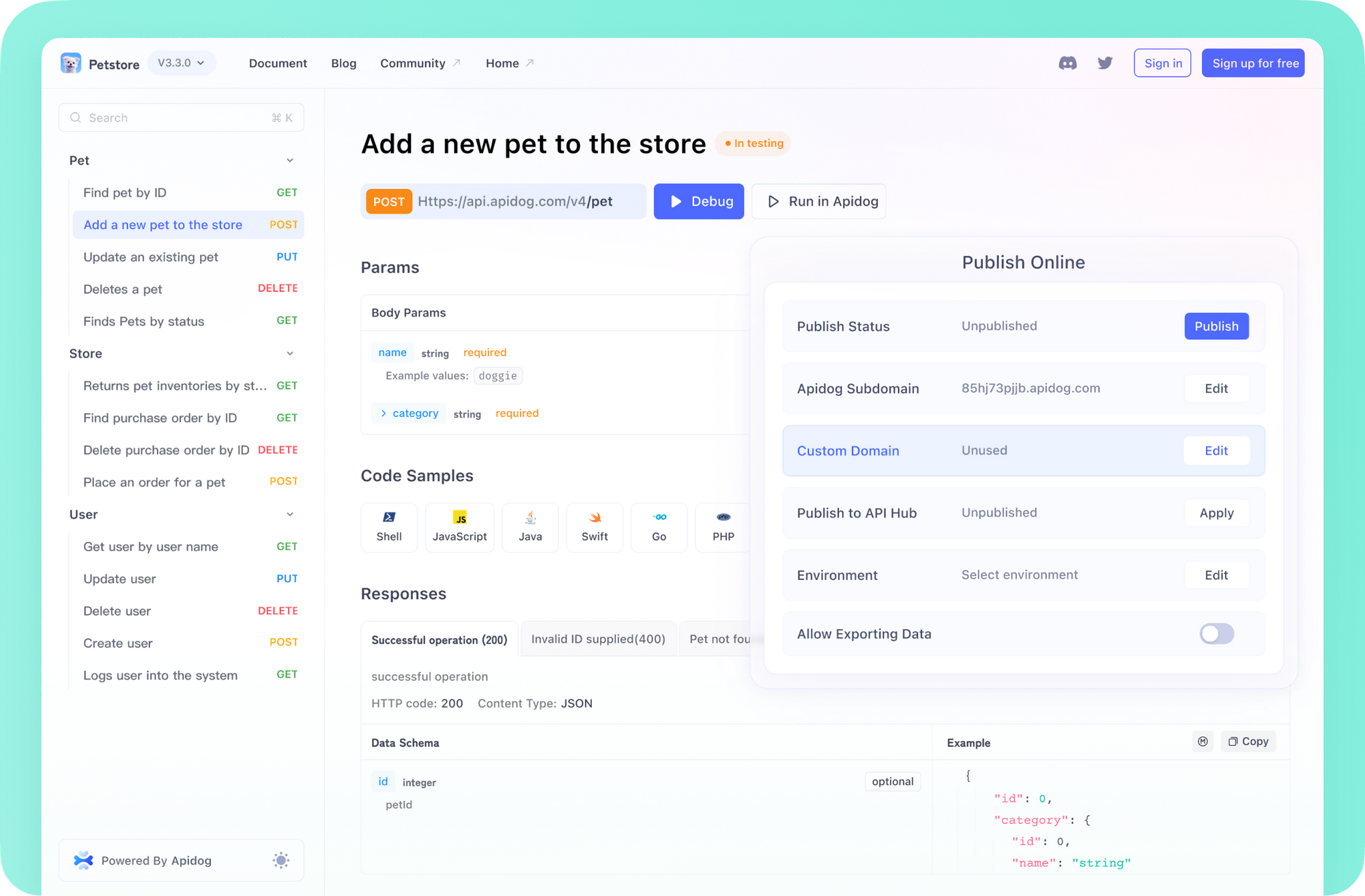

Why Integrate MCP Tools with LangChain?

As AI-powered applications become more modular, there's a growing need to connect models with diverse external tools. MCP provides a standard interface for this, but out-of-the-box support in LangChain and LangGraph is limited.

The langchain-mcp-adapters library solves this by:

- Translating MCP tools into LangChain-compatible objects

- Supporting multiple servers and transport methods

- Simplifying tool aggregation for advanced agent workflows

This approach enables you to combine specialized services (math, weather, APIs, etc.) in a scalable, maintainable architecture.

Key Features of langchain-mcp-adapters

- Automatic MCP Tool Conversion: Wraps MCP tools as LangChain BaseTool objects for agent compatibility.

- Multi-Server Support: The MultiServerMCPClient lets you connect to and aggregate tools from multiple MCP servers.

- Transport Flexibility: Supports both stdio (simple subprocess communication) and sse (Server-Sent Events over HTTP).
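To make the conversion idea concrete, here is a minimal standard-library sketch of wrapping an MCP-style tool description in a callable object. `McpToolSpec`, `WrappedTool`, and `wrap_tool` are hypothetical names used only for illustration; the real adapter produces LangChain BaseTool objects and forwards each call to an MCP session, and its internals may differ.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class McpToolSpec:
    """Hypothetical stand-in for the metadata an MCP server advertises."""
    name: str
    description: str
    input_schema: dict[str, Any]


@dataclass
class WrappedTool:
    """Hypothetical stand-in for an agent-facing tool object."""
    name: str
    description: str
    args_schema: dict[str, Any]
    call: Callable[..., Any]

    def invoke(self, arguments: dict[str, Any]) -> Any:
        # Reject calls that omit arguments the schema marks as required.
        missing = [k for k in self.args_schema.get("required", []) if k not in arguments]
        if missing:
            raise ValueError(f"missing arguments: {missing}")
        return self.call(**arguments)


def wrap_tool(spec: McpToolSpec, remote_call: Callable[..., Any]) -> WrappedTool:
    """Copy the advertised metadata and route invocations to remote_call."""
    return WrappedTool(spec.name, spec.description, spec.input_schema, remote_call)


spec = McpToolSpec(
    name="add",
    description="Add two numbers",
    input_schema={
        "type": "object",
        "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
        "required": ["a", "b"],
    },
)
# A local lambda stands in for the round trip to the server.
tool = wrap_tool(spec, lambda a, b: a + b)
result = tool.invoke({"a": 3, "b": 5})
```

The point of the sketch is that the agent only sees a name, a description, and an argument schema; where the call actually executes is hidden behind the wrapper.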

Understanding MCP Servers and Clients

Before diving into code, let's clarify the architecture:

What is an MCP Server?

- Purpose: Exposes tools (functions) for AI models to call.

- Implementation: Commonly built in Python using the mcp library and FastMCP.

- Defining Tools: Use the @mcp.tool() decorator for automatic input schema generation.

- Defining Prompts: Use @mcp.prompt() for structured conversational starters.

- Transports:

  - stdio: Runs as a subprocess, communicates via standard input/output.

  - sse: Runs as an HTTP web server, enabling remote or distributed connectivity.
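As a rough illustration of what "automatic input schema generation" can mean, the sketch below derives a JSON-Schema-like object from a function signature using only the standard library. `build_input_schema` and the `JSON_TYPES` mapping are assumptions made for this example; FastMCP's own schema generation is more complete and is not reproduced here.

```python
import inspect
from typing import Any, Callable, get_type_hints

# Hypothetical mapping from Python annotations to JSON Schema type names.
JSON_TYPES = {int: "integer", float: "number", str: "string", bool: "boolean"}


def build_input_schema(func: Callable[..., Any]) -> dict[str, Any]:
    """Derive a JSON-Schema-like object from a function signature."""
    hints = get_type_hints(func)
    properties: dict[str, Any] = {}
    required: list[str] = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unknown types fall back to "string" in this sketch.
        properties[name] = {"type": JSON_TYPES.get(hints.get(name), "string")}
        # Parameters without a default value must be supplied by the caller.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {"type": "object", "properties": properties, "required": required}


def add(a: int, b: int = 0) -> int:
    """Add two numbers"""
    return a + b


schema = build_input_schema(add)
```

Here `a` ends up in `required` because it has no default, while `b` is optional. This is why type hints on tool functions matter: they are what the model is shown as the tool's argument contract.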

What is an MCP Client?

- Role: Connects to one or more MCP servers, manages communication, and fetches available tools and prompts.

- MultiServerMCPClient: Handles multiple connections and aggregates tool definitions for use in LangChain agents.
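The aggregation step can be pictured as flattening per-server tool lists into one list for the agent. The sketch below is a standard-library illustration under that assumption; `aggregate_tools` is a hypothetical helper, and rejecting duplicate names is a design choice of this example rather than documented library behavior.

```python
def aggregate_tools(tools_by_server: dict[str, list[str]]) -> list[str]:
    """Flatten per-server tool names into one list, rejecting duplicates."""
    owner: dict[str, str] = {}
    for server, names in tools_by_server.items():
        for name in names:
            if name in owner:
                raise ValueError(
                    f"tool {name!r} is exposed by both {owner[name]!r} and {server!r}"
                )
            owner[name] = server
    # Dicts preserve insertion order, so tools come out in server order.
    return list(owner)


all_tools = aggregate_tools(
    {"math_service": ["add", "multiply"], "weather_service": ["get_weather"]}
)
```

Failing fast on a name collision keeps the agent from silently calling the wrong server when two services expose a tool with the same name.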

Installing the Required Packages

Start by installing the necessary libraries:

pip install langchain-mcp-adapters langgraph langchain-openai

Remember to set your language model provider's API key:

export OPENAI_API_KEY=<your_openai_api_key>

# Or for other providers:

# export ANTHROPIC_API_KEY=<...>
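A missing key usually surfaces only when the first model call fails, so it can help to check up front. The helper below is a small sketch, not part of any library; `missing_keys` is a hypothetical name and the sample values are placeholders.

```python
import os
from typing import Mapping


def missing_keys(required: list[str], env: Mapping[str, str] = os.environ) -> list[str]:
    """Return the required variable names that are unset or empty in env."""
    return [name for name in required if not env.get(name)]


# Example with an explicit mapping instead of the real environment.
sample_env = {"OPENAI_API_KEY": "sk-example", "ANTHROPIC_API_KEY": ""}
unset = missing_keys(["OPENAI_API_KEY", "ANTHROPIC_API_KEY"], sample_env)
```

Calling `missing_keys(["OPENAI_API_KEY"])` at the top of a client script checks the real environment before any server is started.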

Example: Building and Connecting to a Single MCP Server

Let's walk through a simple use case: exposing math functions via MCP, then connecting and using those tools in a LangChain agent.

Step 1: Create an MCP Math Server (math_server.py)

import sys

from mcp.server.fastmcp import FastMCP

mcp = FastMCP("Math")


@mcp.tool()
def add(a: int, b: int) -> int:
    """Add two numbers"""
    # Log to stderr: with the stdio transport, stdout carries protocol messages.
    print(f"Executing add({a}, {b})", file=sys.stderr)
    return a + b


@mcp.tool()
def multiply(a: int, b: int) -> int:
    """Multiply two numbers"""
    print(f"Executing multiply({a}, {b})", file=sys.stderr)
    return a * b


@mcp.prompt()
def configure_assistant(skills: str) -> list[dict]:
    """Configures the assistant with specified skills."""
    return [{
        "role": "assistant",
        "content": f"You are a helpful assistant. You have the following skills: {skills}. Always use only one tool at a time.",
    }]


if __name__ == "__main__":
    print("Starting Math MCP server via stdio...", file=sys.stderr)
    mcp.run(transport="stdio")

Save this file as math_server.py.
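It helps to know what travels over the pipe. MCP messages are JSON-RPC 2.0 objects, and on the stdio transport each one is written as a single newline-terminated line. The sketch below shows that framing with the standard library only; `encode_message` and `decode_stream` are hypothetical helpers with a deliberately strict decoder, not the SDK's implementation.

```python
import json
from typing import Any


def encode_message(message: dict[str, Any]) -> bytes:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the line stays unbroken.
    return (json.dumps(message) + "\n").encode("utf-8")


def decode_stream(data: bytes) -> list[dict[str, Any]]:
    """Split a byte stream into JSON-RPC messages, one per non-empty line."""
    return [json.loads(line) for line in data.decode("utf-8").splitlines() if line.strip()]


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "add", "arguments": {"a": 3, "b": 5}},
}
wire = encode_message(request)
decoded = decode_stream(wire)

# A stray log line on stdout is not valid JSON and breaks strict decoding.
try:
    decode_stream(b"Executing add(3, 5)\n" + wire)
    stray_output_ok = True
except json.JSONDecodeError:
    stray_output_ok = False
```

Because stdout is the message channel, a stdio server should send diagnostic output to stderr so it cannot be mistaken for protocol traffic.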

Step 2: Create the LangChain Client and Agent (client_app.py)

import asyncio
import os

from mcp import ClientSession, StdioServerParameters
from mcp.client.stdio import stdio_client
from langchain_mcp_adapters.tools import load_mcp_tools
from langgraph.prebuilt import create_react_agent
from langchain_openai import ChatOpenAI

# Resolve math_server.py relative to this file so the script works from any directory.
current_dir = os.path.dirname(os.path.abspath(__file__))
math_server_script_path = os.path.join(current_dir, "math_server.py")


async def main():
    model = ChatOpenAI(model="gpt-4o")

    # Launch the math server as a subprocess and talk to it over stdio.
    server_params = StdioServerParameters(
        command="python",
        args=[math_server_script_path],
    )

    print("Connecting to MCP server...")
    async with stdio_client(server_params) as (read, write):
        async with ClientSession(read, write) as session:
            await session.initialize()
            print("Session initialized.")

            print("Loading MCP tools...")
            tools = await load_mcp_tools(session)
            print(f"Loaded tools: {[tool.name for tool in tools]}")

            agent = create_react_agent(model, tools)

            print("Invoking agent...")
            inputs = {"messages": [("human", "what's (3 + 5) * 12?")]}
            # "v2" is the current event schema; "v1" is deprecated.
            async for event in agent.astream_events(inputs, version="v2"):
                print(event)


if __name__ == "__main__":
    asyncio.run(main())

Save as client_app.py in the same directory.

To run:

python client_app.py

The client will start the math server as a subprocess, connect, load tools, and solve math queries via agent calls.
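Printing every streamed event is noisy. If you only care about which tools ran, a filter like the one below can reduce the stream to a short trace. This is a sketch: `tool_trace` is a hypothetical helper, and the sample events are hand-written in a simplified form of the event dictionaries (real events carry more fields, and tool outputs are typically message objects rather than bare numbers).

```python
from typing import Any, Iterable


def tool_trace(events: Iterable[dict[str, Any]]) -> list[str]:
    """Keep only tool start/end events and render them as short strings."""
    lines = []
    for event in events:
        kind, name, data = event.get("event"), event.get("name"), event.get("data", {})
        if kind == "on_tool_start":
            lines.append(f"call {name}({data.get('input')})")
        elif kind == "on_tool_end":
            lines.append(f"result {name} -> {data.get('output')}")
    return lines


# Hand-written sample events, not captured output from a real run.
sample_events = [
    {"event": "on_chat_model_start", "name": "ChatOpenAI", "data": {}},
    {"event": "on_tool_start", "name": "add", "data": {"input": {"a": 3, "b": 5}}},
    {"event": "on_tool_end", "name": "add", "data": {"output": 8}},
    {"event": "on_tool_start", "name": "multiply", "data": {"input": {"a": 8, "b": 12}}},
    {"event": "on_tool_end", "name": "multiply", "data": {"output": 96}},
]
trace = tool_trace(sample_events)
```

For the query (3 + 5) * 12, the expected pattern is one `add` call followed by one `multiply` call on its result, giving 96.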

Advanced: Aggregating Tools from Multiple MCP Servers

LangChain agents shine when empowered with multiple specialized toolkits. Using MultiServerMCPClient, you can combine math, weather, and any other MCP-compliant tools—even across different transports.

Step 1: Create an MCP Weather Server (weather_server.py)

from mcp.server.fastmcp import FastMCP

# Host and port are FastMCP settings, so they are passed to the constructor
# rather than to run().
mcp = FastMCP("Weather", host="0.0.0.0", port=8000)


@mcp.tool()
async def get_weather(location: str) -> str:
    """Get weather for location."""
    print(f"Executing get_weather({location})")
    return f"It's always sunny in {location}"


if __name__ == "__main__":
    print("Starting Weather MCP server via SSE on port 8000...")
    mcp.run(transport="sse")

Save as weather_server.py and run in a separate terminal:

python weather_server.py
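With the SSE transport, the server pushes messages to the client as a text/event-stream, and the client learns from an initial `endpoint` event where to POST its own requests. The parser below is a minimal standard-library sketch of the event-stream format; `parse_sse` is a hypothetical helper, and the sample stream (including the session path) is invented for illustration rather than captured from a running server.

```python
def _field_value(line: str, field: str) -> str:
    """Return the text after 'field:', dropping one optional leading space."""
    value = line[len(field) + 1:]
    return value[1:] if value.startswith(" ") else value


def parse_sse(stream: str) -> list[dict[str, str]]:
    """Parse a text/event-stream body into a list of {event, data} dicts."""
    events = []
    event_type, data_lines = "message", []
    for line in stream.splitlines():
        if line == "":
            # A blank line terminates the current event.
            if data_lines:
                events.append({"event": event_type, "data": "\n".join(data_lines)})
            event_type, data_lines = "message", []
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        elif line.startswith("event:"):
            event_type = _field_value(line, "event")
        elif line.startswith("data:"):
            data_lines.append(_field_value(line, "data"))
    return events


# Invented sample stream: an endpoint announcement, a keep-alive, one message.
sample = (
    "event: endpoint\n"
    "data: /messages/?session_id=abc123\n"
    "\n"
    ": ping\n"
    "\n"
    "event: message\n"
    'data: {"jsonrpc": "2.0", "id": 1, "result": {}}\n'
    "\n"
)
parsed = parse_sse(sample)
```

This is why the client URL in the next step ends in `/sse`: that path is the long-lived event stream, while requests go to the endpoint the server announces.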

Step 2: Create a Multi-Server Client (multi_client_app.py)

import asyncio
import os

from langchain_mcp_adapters.client import MultiServerMCPClient
from langgraph.prebuilt import create_react_agent
from langchain_openai import ChatOpenAI

# Resolve math_server.py relative to this file so the script works from any directory.
current_dir = os.path.dirname(os.path.abspath(__file__))
math_server_script_path = os.path.join(current_dir, "math_server.py")


async def main():
    model = ChatOpenAI(model="gpt-4o")

    # One entry per server; the key is a label of your choosing.
    server_connections = {
        "math_service": {
            "transport": "stdio",
            "command": "python",
            "args": [math_server_script_path],
        },
        "weather_service": {
            "transport": "sse",
            "url": "http://localhost:8000/sse",
        },
    }

    print("Connecting to multiple MCP servers...")
    # As of langchain-mcp-adapters 0.1.0 the client is not a context manager;
    # create it directly and await get_tools().
    client = MultiServerMCPClient(server_connections)
    all_tools = await client.get_tools()
    print(f"Loaded tools: {[tool.name for tool in all_tools]}")

    agent = create_react_agent(model, all_tools)

    print("\nInvoking agent for math query...")
    math_inputs = {"messages": [("human", "what's (3 + 5) * 12?")]}
    math_response = await agent.ainvoke(math_inputs)
    print("Math Response:", math_response["messages"][-1].content)

    print("\nInvoking agent for weather query...")
    weather_inputs = {"messages": [("human", "what is the weather in nyc?")]}
    weather_response = await agent.ainvoke(weather_inputs)
    print("Weather Response:", weather_response["messages"][-1].content)


if __name__ == "__main__":
    asyncio.run(main())

To run:

- Start the weather server:

python weather_server.py

- Run the multi-client app:

python multi_client_app.py

The client will spin up the math server via stdio, connect to the weather server over SSE, and aggregate all tools for the agent.
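A typo in the connection mapping tends to show up as a confusing connection error, so a quick structural check before connecting can save time. The validator below is a sketch built only from the keys used in this article; `check_connections` and `REQUIRED_KEYS` are hypothetical, and the real library may accept more transports and options than listed here.

```python
from typing import Any

# Required keys per transport, taken from the connection dictionaries in this article.
REQUIRED_KEYS = {"stdio": {"command", "args"}, "sse": {"url"}}


def check_connections(connections: dict[str, dict[str, Any]]) -> list[str]:
    """Return human-readable problems found in a server connection mapping."""
    problems = []
    for name, config in connections.items():
        transport = config.get("transport")
        if transport not in REQUIRED_KEYS:
            problems.append(f"{name}: unknown transport {transport!r}")
            continue
        for key in sorted(REQUIRED_KEYS[transport] - set(config)):
            problems.append(f"{name}: missing {key!r} for {transport} transport")
    return problems


good = {
    "math_service": {"transport": "stdio", "command": "python", "args": ["math_server.py"]},
    "weather_service": {"transport": "sse", "url": "http://localhost:8000/sse"},
}
bad = {
    "weather_service": {"transport": "sse"},
    "other": {"transport": "carrier-pigeon"},
}
good_problems = check_connections(good)
bad_problems = check_connections(bad)
```

An empty list means the mapping has the expected shape; it does not guarantee that the server is reachable.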

Deploying LangChain Agents with MCP Tools via LangGraph API Server

You can deploy your agent as a persistent API using LangGraph's deployment features. The graph is built by an async factory function that loads the MCP tools when the server starts.

Example: graph.py

import os

from langchain_mcp_adapters.client import MultiServerMCPClient
from langgraph.prebuilt import create_react_agent
from langchain_openai import ChatOpenAI

# Resolve math_server.py relative to this file rather than the working directory.
current_dir = os.path.dirname(os.path.abspath(__file__))
math_server_script_path = os.path.join(current_dir, "math_server.py")

server_connections = {
    "math_service": {
        "transport": "stdio",
        "command": "python",
        "args": [math_server_script_path],
    },
    "weather_service": {
        "transport": "sse",
        "url": "http://localhost:8000/sse",
    },
}

model = ChatOpenAI(model="gpt-4o")


async def make_graph():
    # As of langchain-mcp-adapters 0.1.0 the client is not a context manager;
    # create it directly and await get_tools().
    client = MultiServerMCPClient(server_connections)
    tools = await client.get_tools()
    print("MCP tools loaded.")
    return create_react_agent(model, tools)

Configure your langgraph.json so the graph entry points at that factory function:

{
  "dependencies": ["."],
  "graphs": {
    "my_mcp_agent": "./graph.py:make_graph"
  }
}

When you run langgraph up, your agent will be served with access to all connected MCP tools.

Choosing Between stdio and SSE Transports

stdio

- Ideal for: Local development, single-language (Python) toolkits, or when the client manages the server's lifecycle.

- Notes: No networking; runs as a subprocess.

sse

- Ideal for: Distributed, production, or cross-language tool servers.

- Notes: Requires separate server process; connects via HTTP.

Select the transport based on your team's deployment and scaling needs.
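One way to encode this choice is a small helper that picks the transport from the kind of target you give it: a URL means a server that is already running, and a script path means a server the client should launch. This is a sketch; `connection_for` is a hypothetical function, and the returned dictionaries use only the keys shown earlier in this article.

```python
from typing import Any
from urllib.parse import urlparse


def connection_for(target: str) -> dict[str, Any]:
    """Build a connection dict: sse for http(s) URLs, stdio for script paths."""
    if urlparse(target).scheme in ("http", "https"):
        # Remote or separately managed server: connect over HTTP.
        return {"transport": "sse", "url": target}
    # Local script: the client starts it as a subprocess.
    return {"transport": "stdio", "command": "python", "args": [target]}


remote = connection_for("http://localhost:8000/sse")
local = connection_for("math_server.py")
```

Keeping the decision in one place makes it easier to move a tool server from local development to a shared deployment without touching agent code.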

Advanced Configuration Options

Both StdioConnection and SSEConnection dictionaries in MultiServerMCPClient can be customized:

- Stdio: env, cwd, encoding, session_kwargs, etc.

- SSE: headers, timeout, sse_read_timeout, session_kwargs, etc.

Refer to langchain_mcp_adapters/client.py for full API details.
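As an illustration, a connection mapping that uses some of these options might look like the sketch below. The option names come from the list above; every value (the `LOG_LEVEL` variable, the `WEATHER_TOKEN` variable, and the timeout numbers and their units) is an assumption made for this example, so check the library source for the exact types and defaults.

```python
import os

server_connections = {
    "math_service": {
        "transport": "stdio",
        "command": "python",
        "args": ["math_server.py"],
        # Extra environment variables for the subprocess (hypothetical value).
        "env": {"LOG_LEVEL": "debug"},
        # Working directory for the subprocess.
        "cwd": os.getcwd(),
        "encoding": "utf-8",
    },
    "weather_service": {
        "transport": "sse",
        "url": "http://localhost:8000/sse",
        # Hypothetical token, read from the environment rather than hard-coded.
        "headers": {"Authorization": f"Bearer {os.environ.get('WEATHER_TOKEN', '')}"},
        # Assumed to be seconds; illustrative values only.
        "timeout": 5,
        "sse_read_timeout": 300,
    },
}
```

Reading secrets such as tokens from the environment keeps them out of source control and lets each deployment supply its own.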

Conclusion

The langchain-mcp-adapters library empowers developers to connect diverse, MCP-compliant tool servers into LangChain and LangGraph agents with ease. By supporting multi-server aggregation, automatic tool conversion, and flexible transport options, you can build modular, API-driven AI applications that scale.

Workflow Recap:

- Define tools and prompts in MCP servers.

- Configure the MultiServerMCPClient with your server details.

- Connect and fetch tools using the client context.

- Pass the tools to your LangChain or LangGraph agent.

Ready to accelerate your API-driven AI development? Explore the repository examples, and see how Apidog can streamline your team's API lifecycle—from design to documentation and testing.