TL;DR

The Seedance 2.0 API launched on April 2, 2026, through Volcengine Ark. You submit a video generation task with a POST request, then poll a GET endpoint until the status reaches "succeeded." The API supports text-to-video, image-to-video, first-and-last-frame control, multimodal references, and native audio generation. A 5-second 1080p video costs roughly 4.74 yuan (about $0.66). Download the video within 24 hours, because the URL expires after that.

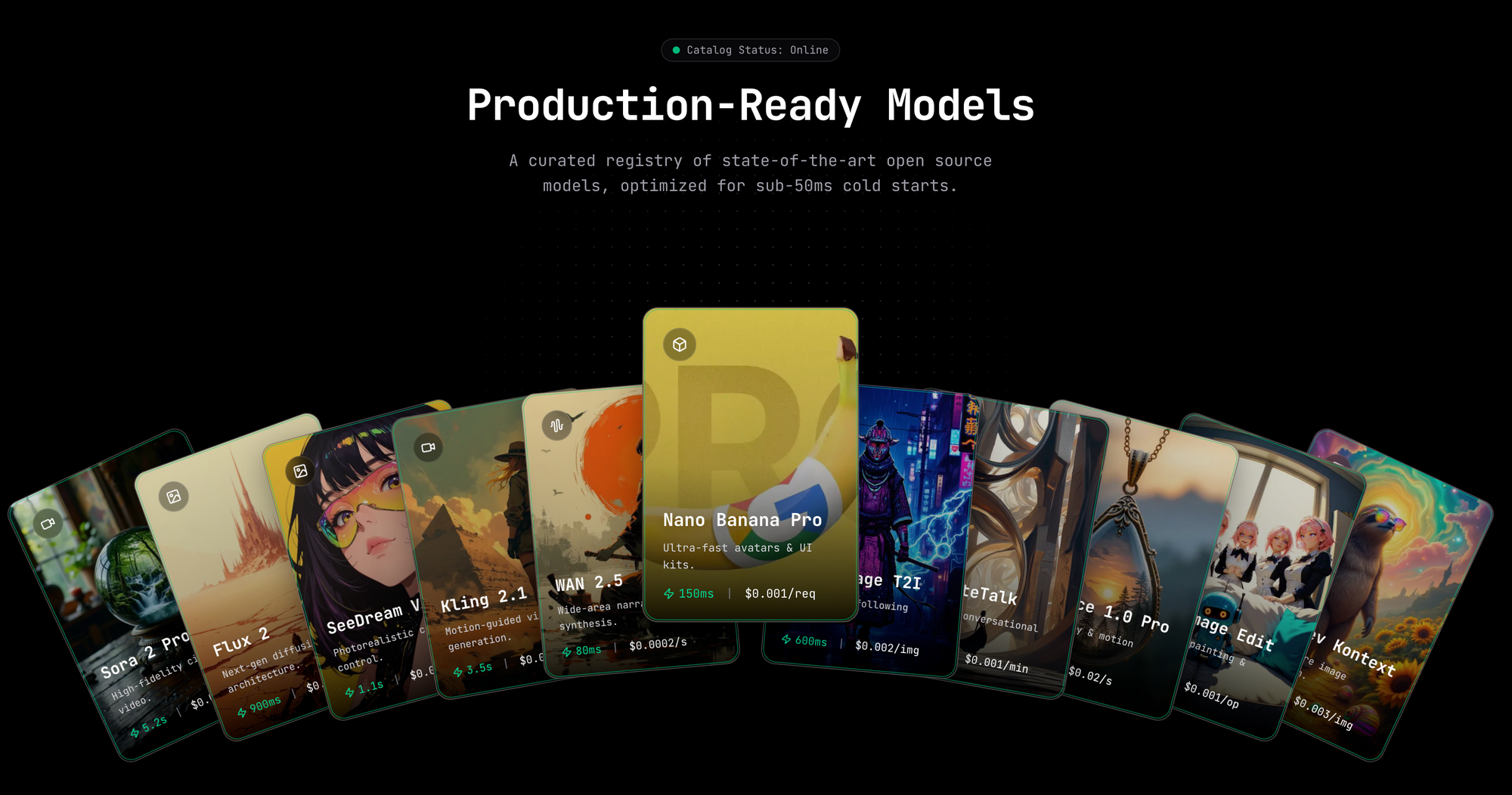


Introduction

On April 2, 2026, ByteDance's Volcengine Ark platform released the official Seedance 2.0 API. Before that date, the only way to generate Seedance 2.0 videos was through the web console. If you've seen tutorials showing a UI walkthrough, those were written for the console. This guide covers the real API that developers can call programmatically.

This article covers every supported input type, the pricing math from the response token count, and the errors you'll hit in production.

What is Seedance 2.0?

Seedance 2.0 is a video generation model from ByteDance. It runs on Volcengine Ark under the model IDs doubao-seedance-2-0-260128 (standard) and doubao-seedance-2-0-fast-260128 (faster, lower quality).

The model supports more input types than version 1.5 did. Version 1.5 handled text-to-video and image-to-video. Version 2.0 adds:

- First-and-last-frame control (you supply both bookend images)

- Multimodal reference inputs: images, video clips, and audio files combined in one request

- Native audio generation, including dialogue, sound effects, ambient sound, and music

- Lip sync in over 8 languages

- Camera motion control through natural language prompts (dolly, tracking, crane shots)

- Output up to 15 seconds long at up to 2K resolution

The model outputs 24 fps video at aspect ratios from 1:1 to 21:9. You pick the resolution at request time.

What changed: guide vs official API

Earlier articles about Seedance 2.0, including a February 2026 guide on this site, described the Seedance 2.0 web console on Volcengine. No API existed at that point. Those guides walked through filling in a prompt field on a webpage and clicking a generate button.

The April 2, 2026 API release changes that entirely. You can now call the API from any language, automate video generation pipelines, and integrate Seedance into your own product. This guide supersedes the UI walkthrough for any developer use case.

Prerequisites

You need a Volcengine account to get started. Create one at volcengine.com. Once your account is active, go to the Ark console at:

https://console.volcengine.com/ark/region:ark+cn-beijing/apikey

Generate an API key there. Then export it as an environment variable:

export ARK_API_KEY="your-api-key-here"

Every request to the API uses this key in a Bearer token header:

Authorization: Bearer YOUR_ARK_API_KEY

New accounts receive free trial credits. These cover roughly 8 full 15-second generations at 1080p before you pay anything.

Text-to-video: your first request

The base URL for all Seedance API calls is:

https://ark.cn-beijing.volces.com/api/v3

To submit a text-to-video task, POST to /contents/generations/tasks under that base URL.

cURL example

curl -X POST "https://ark.cn-beijing.volces.com/api/v3/contents/generations/tasks" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $ARK_API_KEY" \
  -d '{
    "model": "doubao-seedance-2-0-260128",
    "content": [
      {
        "type": "text",
        "text": "A golden retriever running through a sunlit wheat field, wide tracking shot, cinematic"
      }
    ],
    "resolution": "1080p",
    "ratio": "16:9",
    "duration": 5,
    "watermark": false
  }'

The API returns a task ID immediately:

{"id": "cgt-2025xxxxxxxx-xxxx"}

Python example (official SDK)

Install the SDK first:

pip install 'volcengine-python-sdk[ark]'

Then submit a task:

import os
from volcenginesdkarkruntime import Ark
client = Ark(api_key=os.environ.get("ARK_API_KEY"))
resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {
            "type": "text",
            "text": "A golden retriever running through a sunlit wheat field, wide tracking shot, cinematic",
        }
    ],
    resolution="1080p",
    ratio="16:9",
    duration=5,
    watermark=False,
)
print(resp.id)

Store the task ID. You'll need it for the polling step.

The async task pattern: submit, poll, download

Seedance generation is not instant. A 5-second 1080p video typically takes 60 to 120 seconds. The API handles this with an async task lifecycle:

queued -> running -> succeeded
                  -> failed
                  -> expired
                  -> cancelled

Poll the GET endpoint until the status is no longer queued or running. Any other status is final.

Full Python polling loop

import os
import time
import requests
from volcenginesdkarkruntime import Ark
client = Ark(api_key=os.environ.get("ARK_API_KEY"))
# Step 1: submit
resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {"type": "text", "text": "Aerial shot of a mountain lake at sunrise, slow dolly forward"}
    ],
    resolution="1080p",
    ratio="16:9",
    duration=5,
    watermark=False,
)
task_id = resp.id
print(f"Task submitted: {task_id}")
# Step 2: poll with exponential backoff (10 s, 20 s, 40 s, then every 60 s)
wait = 10
while True:
    result = client.content_generation.tasks.get(task_id=task_id)
    status = result.status
    print(f"Status: {status}")
    if status == "succeeded":
        video_url = result.content.video_url
        print(f"Video URL: {video_url}")
        break
    elif status in ("failed", "expired", "cancelled"):
        print(f"Task ended with status: {status}")
        break
    time.sleep(wait)
    wait = min(wait * 2, 60)  # cap at 60 seconds
# Step 3: download immediately; the URL expires 24 hours after the task succeeds
if status == "succeeded":
    with requests.get(video_url, stream=True, timeout=60) as response:
        response.raise_for_status()  # a 403 here means the URL has already expired
        with open("output.mp4", "wb") as f:
            for chunk in response.iter_content(chunk_size=8192):
                f.write(chunk)
    print("Downloaded: output.mp4")

The exponential backoff prevents hammering the API. The cap at 60 seconds keeps polling frequent enough for practical use.
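To see what that schedule means in practice, the sketch below isolates the delay sequence used by the loop. The generator name backoff_schedule is hypothetical and is not part of the Ark SDK. With the defaults, a job that finishes after about 120 seconds is detected at roughly the 130-second poll, because polls land at about 0, 10, 30, 70, and 130 seconds (ignoring request latency).

```python
from itertools import islice
from typing import Iterator


def backoff_schedule(initial: float = 10, factor: float = 2, cap: float = 60) -> Iterator[float]:
    """Yield successive poll delays: initial, initial*factor, ..., never exceeding `cap`."""
    wait = initial
    while True:
        yield wait
        wait = min(wait * factor, cap)


# The first six sleeps and the total time spent sleeping across them.
delays = list(islice(backoff_schedule(), 6))
print(delays)       # [10, 20, 40, 60, 60, 60]
print(sum(delays))  # 250
```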

Image-to-video (I2V): animating a still image

To animate an image, add an image_url object to the content array alongside your text prompt. The image becomes the first frame of the video.

resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {"type": "text", "text": "The woman slowly turns her head and smiles at the camera"},
        {"type": "image_url", "image_url": {"url": "https://example.com/portrait.jpg"}},
    ],
    ratio="adaptive",
    duration=5,
    watermark=False,
)

Setting ratio to "adaptive" tells the model to use the input image's native aspect ratio. This avoids unwanted cropping or letterboxing.

Each image must be under 30MB. You can supply up to 9 images in a single request.

First and last frame: controlling start and end points

Seedance 2.0 supports bookend frame control. You supply the first frame image, the last frame image, and a text prompt. The model generates the in-between motion.

This is useful for product transitions, morphing effects, or any sequence where you know your start and end states.

resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {"type": "text", "text": "The flower blooms from bud to full open, macro lens, soft light"},
        {"type": "image_url", "image_url": {"url": "https://example.com/flower-bud.jpg"}},   # first frame
        {"type": "image_url", "image_url": {"url": "https://example.com/flower-open.jpg"}},  # last frame
    ],
    ratio="adaptive",
    duration=8,
    watermark=False,
)

The model infers that two images mean first-and-last-frame mode when a text prompt is also present. Include both images in order: first frame first, last frame second.
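Because list order is what distinguishes the first frame from the last, a small helper can keep that order fixed whenever you build these requests in code. This is a sketch. The name first_last_frame_content is hypothetical, and the helper relies only on the ordering rule described in this guide, not on any explicit role field.

```python
from typing import Any


def first_last_frame_content(prompt: str, first_frame_url: str, last_frame_url: str) -> list[dict[str, Any]]:
    """Build a content array for first-and-last-frame mode: prompt, then first frame, then last frame."""
    return [
        {"type": "text", "text": prompt},
        {"type": "image_url", "image_url": {"url": first_frame_url}},  # first frame
        {"type": "image_url", "image_url": {"url": last_frame_url}},   # last frame
    ]


content = first_last_frame_content(
    "The flower blooms from bud to full open, macro lens, soft light",
    "https://example.com/flower-bud.jpg",
    "https://example.com/flower-open.jpg",
)
```

Pass the result as content= to client.content_generation.tasks.create, as in the example above.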

You can also use return_last_frame: true when generating a clip. This returns an image of the final frame alongside the video URL. Feed that image as the first frame of your next request to chain multiple clips into a longer sequence.

Multimodal reference: combining images, video, and audio

One of the strongest additions in Seedance 2.0 is accepting video and audio as reference inputs in the same request as images and text.

The content array can hold:

{"type": "text", "text": "..."}for the prompt{"type": "image_url", "image_url": {"url": "..."}}for images{"type": "video_url", "video_url": {"url": "..."}}for video references{"type": "audio_url", "audio_url": {"url": "..."}}for audio references

Limits per request:

- Up to 9 images (max 30MB each)

- Up to 3 video clips (2 to 15 seconds each, max 50MB each)

- Up to 3 audio files (MP3, max 15MB each)
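A cheap client-side check on the item counts can catch an oversized request before it reaches the API. The sketch below hard-codes the per-request counts listed above. It does not read them from the API, and it does not check file sizes or clip durations. The names LIMITS and check_reference_counts are hypothetical.

```python
from collections import Counter
from typing import Any

# Per-request item limits as listed in this guide (not fetched from the API).
LIMITS = {"image_url": 9, "video_url": 3, "audio_url": 3}


def check_reference_counts(content: list[dict[str, Any]]) -> list[str]:
    """Return human-readable problems; an empty list means every count is within its limit."""
    counts = Counter(item["type"] for item in content)
    problems = []
    for kind, limit in LIMITS.items():
        if counts[kind] > limit:
            problems.append(f"{counts[kind]} {kind} items (max {limit})")
    return problems
```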

A combined reference example:

resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {"type": "text", "text": "Match the visual style of the reference clip and add the provided background audio"},
        {"type": "image_url", "image_url": {"url": "https://example.com/style-reference.jpg"}},
        {"type": "video_url", "video_url": {"url": "https://example.com/motion-reference.mp4"}},
        {"type": "audio_url", "audio_url": {"url": "https://example.com/background-music.mp3"}},
    ],
    duration=10,
    ratio="16:9",
    watermark=False,
)

When you include a video reference, the billing rate drops to the V2V tier: approximately $3.90 per million tokens instead of $6.40.

Native audio generation

Set generate_audio: true to have Seedance generate an audio track alongside the video. The model performs joint audio-video generation, so the sounds match the on-screen action rather than being layered on afterward.

Audio generation covers dialogue, sound effects, ambient noise, and background music. Lip sync works in over 8 languages.

resp = client.content_generation.tasks.create(
    model="doubao-seedance-2-0-260128",
    content=[
        {"type": "text", "text": "A street musician plays guitar outside a cafe in Paris, crowds passing by, city sounds"}
    ],
    resolution="1080p",
    ratio="16:9",
    duration=10,
    generate_audio=True,
    watermark=False,
)

Native audio generation increases token consumption slightly compared to silent video. Factor this into your cost estimates.

Controlling resolution, ratio, and duration

Three parameters shape the output:

resolution accepts "480p", "720p", "1080p", or "2K". The default is "1080p". Higher resolution means more tokens consumed and higher cost.

ratio accepts "16:9", "9:16", "4:3", "3:4", "21:9", "1:1", or "adaptive". Use "adaptive" when your input image has an unusual aspect ratio. The model reads the image dimensions and sets ratio accordingly.

duration accepts integers from 4 to 15. The unit is seconds. The default is 5. Longer videos cost proportionally more.
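Validating these three values locally gives a clearer error than a failed task. The sketch below assumes the allowed values listed in this guide still match the live API. The helper name output_params is hypothetical.

```python
# Allowed values as listed in this guide (assumption: they match the current API).
RESOLUTIONS = {"480p", "720p", "1080p", "2K"}
RATIOS = {"16:9", "9:16", "4:3", "3:4", "21:9", "1:1", "adaptive"}


def output_params(resolution: str = "1080p", ratio: str = "16:9", duration: int = 5) -> dict:
    """Check resolution/ratio/duration locally and return them as request keyword arguments."""
    if resolution not in RESOLUTIONS:
        raise ValueError(f"unsupported resolution: {resolution!r}")
    if ratio not in RATIOS:
        raise ValueError(f"unsupported ratio: {ratio!r}")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(duration, bool) or not isinstance(duration, int) or not 4 <= duration <= 15:
        raise ValueError(f"duration must be an integer from 4 to 15, got {duration!r}")
    return {"resolution": resolution, "ratio": ratio, "duration": duration}
```

Usage: client.content_generation.tasks.create(model=..., content=..., **output_params("720p", "9:16", 8)).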

The fast model (doubao-seedance-2-0-fast-260128) generates at lower quality but finishes faster. Use it for prototyping or when you're iterating on prompts. Switch to the standard model for production output.

When to pick Seedance 2.0 over other video APIs: choose Seedance when you need native audio-video joint generation, bookend frame control, or multimodal reference inputs. If you only need simple text-to-video and cost is the priority, the fast model at 480p is the cheapest option in this class.

Reading the cost from the response

After a task succeeds, the response includes a usage field:

{
  "usage": {
    "completion_tokens": 246840,
    "total_tokens": 246840
  }
}

Token consumption correlates with video length and resolution. A reference point from the official docs: a 15-second 1080p video consumes approximately 308,880 tokens. A 5-second 1080p video uses roughly 102,960 tokens.

Pricing for T2V and I2V at 1080p is 46 yuan per million tokens (about $6.40 per million tokens at current exchange rates).

Quick estimates:

- 5-second 1080p video: approximately 102,960 tokens = about 4.74 yuan = roughly $0.66

- 10-second 1080p video: approximately 205,920 tokens = about 9.47 yuan = roughly $1.32

For V2V tasks (requests that include a video reference), the rate drops to 28 yuan per million tokens (about $3.90 per million tokens).

You can check the exact token count on every response and build cost tracking into your application. Multiply completion_tokens by the rate for your task type.
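That multiplication fits in a few lines. The guide's two reference points both work out to 20,592 tokens per second of 1080p video (102,960 / 5 and 308,880 / 15). The sketch uses the rates quoted above and derives the yuan-to-dollar conversion from the guide's "46 yuan ≈ $6.40", so live exchange rates will shift the dollar figure. The rate keys and the function name task_cost are hypothetical.

```python
# Rates quoted in this guide, in yuan per million tokens.
RATES_YUAN_PER_M = {"t2v_i2v_1080p": 46.0, "v2v": 28.0}
YUAN_PER_USD = 46.0 / 6.40  # about 7.19, implied by the guide's own conversion


def task_cost(completion_tokens: int, task_type: str) -> tuple[float, float]:
    """Return (yuan, usd) for one task, given completion_tokens from the response's usage field."""
    yuan = completion_tokens * RATES_YUAN_PER_M[task_type] / 1_000_000
    return yuan, yuan / YUAN_PER_USD


yuan, usd = task_cost(102_960, "t2v_i2v_1080p")
print(f"{yuan:.2f} yuan / ${usd:.2f}")  # 4.74 yuan / $0.66
```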

Important: download the video within 24 hours

The video_url in a succeeded response points to Volcengine object storage. That URL expires 24 hours after the task succeeds. After that, the URL returns a 403 error and the file is gone.

Always download the file to your own storage immediately after the status changes to succeeded. The polling loop in the earlier section includes this download step as part of the standard flow.
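If downloads go through a queue instead of happening inline, it helps to know when a queued download is no longer worth attempting. This sketch assumes you record the time your poller first saw "succeeded". It does not read a timestamp field from the API, because this guide doesn't name one. It also keeps a one-hour safety margin before the 24-hour expiry. The names download_deadline and url_still_safe are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

URL_LIFETIME = timedelta(hours=24)  # video_url lifetime stated in this guide


def download_deadline(succeeded_at: datetime, margin: timedelta = timedelta(hours=1)) -> datetime:
    """Latest time to start a download, keeping `margin` in reserve before the URL expires."""
    return succeeded_at + URL_LIFETIME - margin


def url_still_safe(succeeded_at: datetime, now: Optional[datetime] = None) -> bool:
    """True while the current time is before the deadline; after that, plan to regenerate instead."""
    now = now or datetime.now(timezone.utc)
    return now < download_deadline(succeeded_at)
```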

The execution_expires_after field is also expressed in seconds, but it applies to the task, not to the video URL. A value of 172800 means 48 hours for the task record itself. The video URL still expires after 24 hours regardless, so plan downloads around the 24-hour rule.

Task history is also limited to the last 7 days. You cannot query tasks older than that.

How to test the Seedance API with Apidog

The async task pattern has two API calls that depend on each other. You can't write a single-request test for it. Apidog's Test Scenarios handle this with a chained flow.

Here's the exact setup:

Step 1: Create a Test Scenario

In Apidog, go to the Tests module and create a new scenario called "Seedance 2.0 video generation." Set your environment variable ARK_API_KEY in Apidog's environment settings. Use {{ARK_API_KEY}} anywhere you'd reference the key.

Step 2: Add the submit request

Add a custom POST request step to https://ark.cn-beijing.volces.com/api/v3/contents/generations/tasks. Set the Authorization header to Bearer {{ARK_API_KEY}}. Add your JSON body with the model and content fields.

After this step, add an Extract Variable processor. Set it to extract from the response body using the JSONPath expression $.id. Save the value to an environment variable called TASK_ID.

Step 3: Add a Wait processor

Insert a Wait processor after the extraction step. Set the delay to 30 seconds. This gives the model time to start processing before your first poll attempt.

Step 4: Add the poll request in a For loop

Add a For loop control block with a maximum of 20 iterations. Inside the loop:

- Add a GET request step to https://ark.cn-beijing.volces.com/api/v3/contents/generations/tasks/{{TASK_ID}} with the same Authorization header.

- Add a Wait processor with a 10-second delay after the GET request.

- Set the loop's Break If condition to cover every terminal status: $.status == "succeeded" or $.status == "failed" or $.status == "expired" or $.status == "cancelled".

Step 5: Add assertions

After the loop exits, add an Assertion processor that checks:

- $.status equals "succeeded"

- $.content.video_url is not empty

Run the scenario and Apidog generates a full test report showing each step, the extracted task ID, all poll responses, and whether the final assertions passed.

You can also import the Seedance endpoints directly from a cURL command into the test scenario steps. This approach works well when you want to quickly add the submit and poll requests without manually entering every header and parameter.

Pricing breakdown: what a 10-second video costs

The Seedance API uses pay-as-you-go token pricing. There are no monthly seats or credits to manage beyond the initial trial balance.

| Task type | Rate (per 1M tokens) |
|---|---|
| T2V / I2V at 1080p | 46 yuan (~$6.40) |
| V2V (video reference input) | 28 yuan (~$3.90) |

Approximate costs for common durations at 1080p:

| Duration | Approx tokens | Cost (T2V/I2V) |
|---|---|---|
| 5 seconds | ~103,000 | ~4.74 yuan / ~$0.66 |
| 10 seconds | ~206,000 | ~9.48 yuan / ~$1.32 |
| 15 seconds | ~309,000 | ~14.21 yuan / ~$1.98 |

New accounts start with free trial credits covering around 8 full 15-second generations. Use this allowance to experiment with prompts and settings before committing to a production workload.

Lower resolution significantly reduces token consumption. A 480p video at the same duration costs considerably less than 1080p. Start development at 720p, then upgrade resolution only for your final output.

Common errors and fixes

429 Too Many Requests

This means you've hit the concurrency limit, not a per-minute request rate limit: too many tasks are running at the same time. Back off exponentially when you see this status code. Start with a 10-second wait and double it on each retry, capping at 60 seconds. The polling loop shown earlier uses the same schedule between status checks, but it does not retry a submit request that was rejected with 429.
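A retry wrapper for the submit call might look like the sketch below. It calls the REST endpoint directly with requests rather than through the SDK so it can inspect the HTTP status code. Only 429 is retried, and any other error is raised at once. The post and sleep parameters are injectable so the logic can be tested offline. The name submit_with_retry and the attempt limit of 6 are illustrative choices, not part of the Ark API.

```python
import os
import time
from typing import Any, Callable, Optional

TASKS_URL = "https://ark.cn-beijing.volces.com/api/v3/contents/generations/tasks"


def submit_with_retry(
    payload: dict[str, Any],
    post: Optional[Callable[..., Any]] = None,
    sleep: Callable[[float], None] = time.sleep,
    max_attempts: int = 6,
) -> str:
    """POST a generation task, backing off 10, 20, 40, 60, 60 s on HTTP 429; return the task ID."""
    if post is None:
        import requests  # imported lazily so the retry logic can be exercised without network access

        post = requests.post
    headers = {"Authorization": f"Bearer {os.environ.get('ARK_API_KEY', '')}"}
    wait = 10
    for attempt in range(1, max_attempts + 1):
        resp = post(TASKS_URL, json=payload, headers=headers, timeout=30)
        if resp.status_code != 429:
            resp.raise_for_status()  # other 4xx/5xx errors are surfaced, not retried
            return resp.json()["id"]
        if attempt < max_attempts:
            sleep(wait)
            wait = min(wait * 2, 60)
    raise RuntimeError(f"still receiving 429 after {max_attempts} attempts")
```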

status: "failed"

A failed task means the model could not generate the video. Common causes: the prompt contained content that violated safety filters, the input image was corrupted or too large, or the combination of parameters was invalid. Check your input files and prompt, then resubmit.

status: "expired"

A task expires if it stays in the queue too long without completing. This can happen during peak load periods. Resubmit the task. There's no way to restart an expired task.

403 on video_url

The URL has expired because the 24-hour window passed before you downloaded the file. The task record may still exist in the API for up to 7 days, but the video file is gone. Regenerate it with the same parameters, plus the same seed value if you saved one.

Seed reproducibility

If you saved the seed value from a previous response, pass it back in the next request with the same parameters. The model will attempt to reproduce the same output. This is useful for regenerating expired videos with closely matching results, though exact reproduction is not guaranteed.
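Seed-based regeneration only works if you keep the seed together with the exact request parameters. This sketch stores both in a JSON file. It assumes you read the seed from the task response (this guide doesn't give the field path) and that the create call accepts a seed keyword, as the paragraph above implies. The helper names save_job and load_job are hypothetical.

```python
import json
from pathlib import Path
from typing import Any


def save_job(path: Path, params: dict[str, Any], seed: int) -> None:
    """Persist the exact request parameters plus the returned seed so the video can be regenerated later."""
    path.write_text(json.dumps({**params, "seed": seed}, indent=2, sort_keys=True))


def load_job(path: Path) -> dict[str, Any]:
    """Load saved parameters, ready to pass back as keyword arguments to the create call."""
    return json.loads(path.read_text())
```

Usage, under the same assumption: client.content_generation.tasks.create(**load_job(Path("job.json"))).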

Conclusion

The Seedance 2.0 API gives you programmatic access to one of the most capable video generation models available today. The async task pattern is straightforward: POST to create a task, poll until succeeded, download immediately. The multimodal inputs, native audio generation, and bookend frame control make it possible to build video workflows that were impossible from a web console.

Set up your test coverage in Apidog before you go to production. The Test Scenario chain catches broken polling logic, missing extraction steps, and URL expiry issues before they affect real users.

FAQ

Q: What's the difference between doubao-seedance-2-0-260128 and doubao-seedance-2-0-fast-260128?

The standard model produces higher quality output and is the default for production. The fast model completes jobs more quickly at lower visual quality. Use the fast model when iterating on prompts and switch to standard for final renders.

Q: Can I use Seedance 2.0 outside China?

The API endpoint is hosted in the Beijing region. Developers outside China can call it, but latency will be higher. Check Volcengine's terms of service for any geographic restrictions on your account type.

Q: How do I chain multiple clips into a longer video?

Set return_last_frame: true on each generation. The response will include an image of the last frame alongside the video URL. Pass that image as the first frame of your next request. Repeat until you have all the clips you need, then stitch them using a video editing library.
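For the stitching step, ffmpeg's concat demuxer is one common option. It is a tool choice for this sketch, since the guide only says "a video editing library". The sketch assumes ffmpeg is on your PATH. It also assumes every clip shares the same codec, resolution, and frame rate, which -c copy requires and which holds when all chained requests use the same settings. The names concat_list and stitch are hypothetical.

```python
import subprocess
from pathlib import Path


def concat_list(clips: list[Path]) -> str:
    """Build the text of an ffmpeg concat-demuxer list file, one `file '...'` line per clip."""
    lines = []
    for clip in clips:
        # ffmpeg quoting: close the quote, emit an escaped quote, reopen the quote.
        escaped = str(clip.resolve()).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def stitch(clips: list[Path], output: Path) -> None:
    """Join clips in order without re-encoding, writing the list file next to the output."""
    list_file = output.with_suffix(".txt")
    list_file.write_text(concat_list(clips))
    subprocess.run(
        ["ffmpeg", "-y", "-f", "concat", "-safe", "0", "-i", str(list_file), "-c", "copy", str(output)],
        check=True,
    )
```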

Q: Does native audio generation cost more?

Native audio generation increases token consumption slightly because the model runs joint audio-video generation rather than video-only. Expect a modest increase in completion_tokens compared to the same request without generate_audio: true.

Q: Can I set a webhook instead of polling?

Yes. Pass a callback_url parameter in your submit request. The API will POST the completed task result to that URL when the status changes. This is more efficient than polling for high-volume pipelines.
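A minimal receiver can be built with Python's standard library. This is a sketch under assumptions: the callback body is taken to be JSON with the same id and status fields as the GET response, and the receiver ignores non-terminal statuses in case intermediate updates are also sent. This guide doesn't describe whether callbacks are signed, so don't trust the payload blindly. Re-fetch the task with a GET request before acting on it. The names parse_callback and CallbackHandler are hypothetical.

```python
import json
from http.server import BaseHTTPRequestHandler
from typing import Any, Optional

TERMINAL = {"succeeded", "failed", "expired", "cancelled"}


def parse_callback(body: bytes) -> Optional[dict[str, Any]]:
    """Return the payload if it reports a terminal status, else None (field names are assumed)."""
    payload = json.loads(body)
    if payload.get("status") in TERMINAL:
        return payload
    return None


class CallbackHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        task = parse_callback(self.rfile.read(length))
        if task is not None:
            # Hand the task ID to a queue or worker here; keep the handler fast.
            print(f"task {task.get('id')} finished with status {task['status']}")
        self.send_response(200)
        self.end_headers()
```

To serve it, run HTTPServer(("0.0.0.0", 8000), CallbackHandler).serve_forever() (HTTPServer is in http.server) behind a public HTTPS endpoint, and pass that endpoint's URL as callback_url.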

Q: What happens if I exceed the 9-image limit?

The API returns a 400 validation error before the task is created. Reduce the number of images in your content array to 9 or fewer.

Q: Is the seed parameter guaranteed to reproduce the exact same video?

The seed parameter makes outputs more reproducible. Exact reproduction is not guaranteed if parameters differ or server-side model versions change. It's the closest approximation available.

Q: How do I track spending across multiple tasks?

Read the completion_tokens field from each task's response and multiply by your tier's token rate. Log these values to a database for cost tracking. There's no built-in spending dashboard in the API, so build your own tracking from the start.
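A small SQLite ledger is enough to start. This sketch uses the rates quoted in this guide and keys rows by task ID, so logging the same task twice does not double-count it. The table layout, rate keys, and function names are illustrative assumptions.

```python
import sqlite3
import time

RATES_YUAN_PER_M = {"t2v_i2v_1080p": 46.0, "v2v": 28.0}  # rates quoted in this guide


def open_ledger(path: str = "seedance_costs.db") -> sqlite3.Connection:
    """Open (or create) the cost ledger database."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS task_costs (
               task_id TEXT PRIMARY KEY,
               task_type TEXT NOT NULL,
               completion_tokens INTEGER NOT NULL,
               cost_yuan REAL NOT NULL,
               logged_at REAL NOT NULL
           )"""
    )
    return conn


def log_task(conn: sqlite3.Connection, task_id: str, task_type: str, completion_tokens: int) -> float:
    """Record one task's cost; re-logging the same task_id replaces the earlier row."""
    cost = completion_tokens * RATES_YUAN_PER_M[task_type] / 1_000_000
    with conn:  # commits on success
        conn.execute(
            "INSERT OR REPLACE INTO task_costs VALUES (?, ?, ?, ?, ?)",
            (task_id, task_type, completion_tokens, cost, time.time()),
        )
    return cost


def total_spend_yuan(conn: sqlite3.Connection) -> float:
    """Sum of all logged costs, in yuan."""
    (total,) = conn.execute("SELECT COALESCE(SUM(cost_yuan), 0) FROM task_costs").fetchone()
    return total
```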