The latest advances in artificial intelligence are changing how developers and API teams approach complex problem-solving. OpenAI’s o3 and o4-mini models, released in April 2025, set a new standard in reasoning, multimodal tool use, and API integration. That makes them highly relevant for API developers, backend engineers, and technical leads who need capable, versatile, and scalable AI solutions.

If you’re evaluating which OpenAI model best fits your workflow—or how to leverage these models for smarter API automation and testing—this guide breaks down their core features, benchmarks, pricing, and real-world use cases.

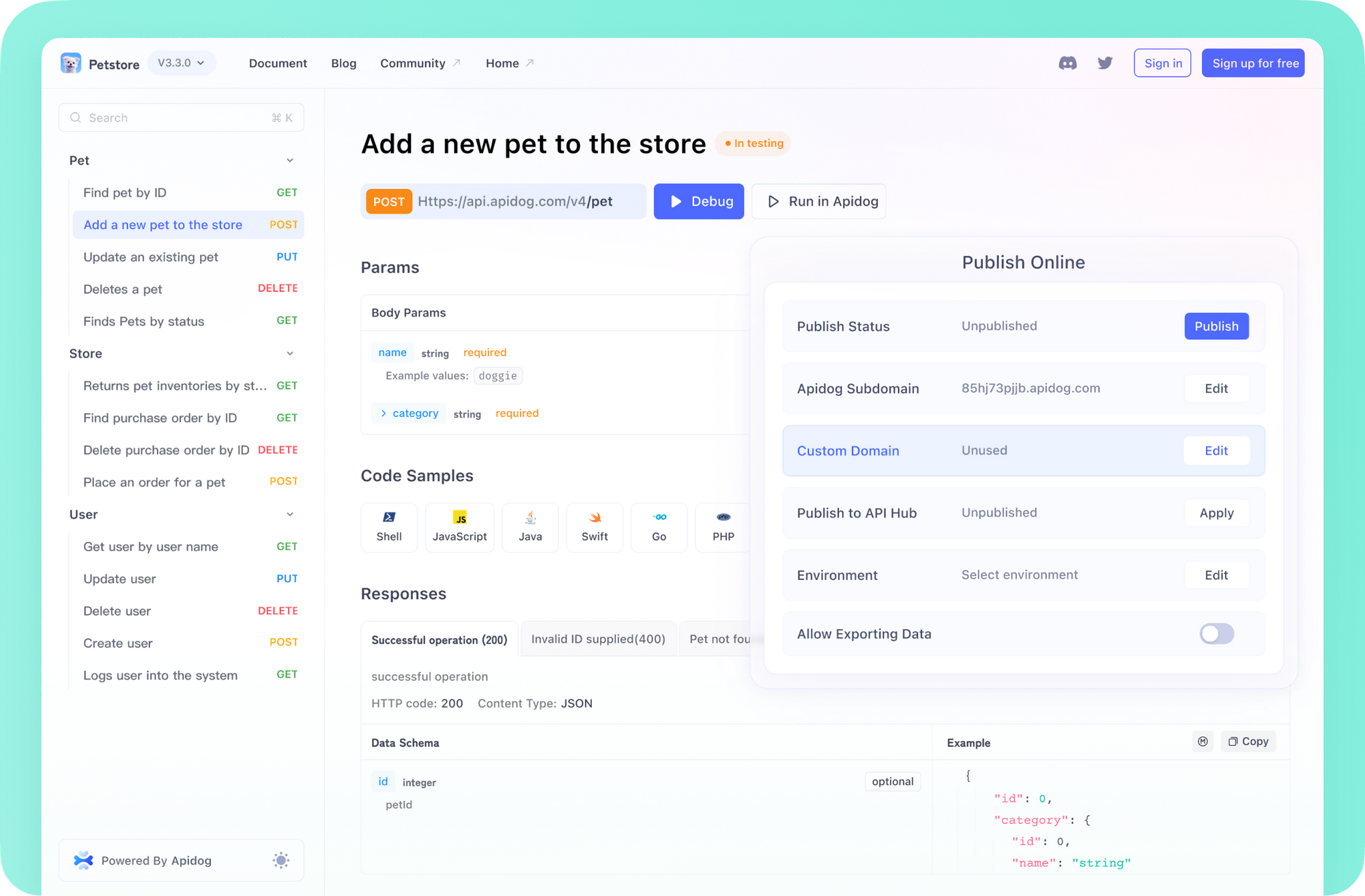

💡 Looking for an API testing tool that creates beautiful API documentation? Want an all-in-one platform to boost your developer team's productivity? Apidog delivers both—replacing Postman at a more affordable price!

What’s New in OpenAI o3 and o4-mini?

OpenAI’s o3 and o4-mini models replace earlier reasoning models (o1, o3-mini, o3-mini-high) in ChatGPT, with significant improvements in integrated reasoning and multimodal tool use. Unlike their predecessors, these models do more than process text and images: they reason with and act through a diverse set of tools, including:

- Web Search: Pull and synthesize live data from the internet

- Python Execution: Run code for calculations or data analysis

- Image Analysis: Extract and interpret information from images

- File Interpretation: Analyze various document types

- Image Generation: Create new visuals from prompts

For the first time, these capabilities are agentically integrated. That means the model can combine tools within a single task—for example, analyzing spreadsheet data, cross-referencing online articles, running calculations, and generating a visual summary, all in one workflow.

Why Does This Matter for API Teams?

- End-to-end automation: Automate documentation, generate test cases, or analyze logs using both textual and visual data.

- Complex chain-of-thought reasoning: Tackle tasks requiring multi-step, multi-tool workflows—ideal for debugging, reporting, or compliance checks.

- Streamlined collaboration: Integrate these models into API platforms like Apidog for smarter QA, faster prototyping, and improved team alignment.

Deep Dive: Integrated Tool Use and Multimodal Reasoning

Agentic Tool Chaining in Practice

Earlier reasoning models could not agentically combine the full set of tools within a single task. o3 and o4-mini can decide which tools to use, and in what order, as part of one coherent sequence. For example:

- API Documentation: Upload API specs, extract endpoints, cross-check with current online documentation, and generate visual diagrams—automatically.

- Data Validation: Analyze uploaded CSVs, compare results to updated standards online, and summarize discrepancies.

- Incident Analysis: Review log files, extract error patterns, correlate with the latest known issues, and suggest remediation—all in one request.

This level of integration enables developers and teams to automate more of their daily tasks and reduce manual handoffs.
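The incident-analysis example above can be approximated as a chained pipeline in which each step consumes the previous step's output. This Python sketch is only an analogy: in o3 and o4-mini the model itself decides which tool to call next, whereas here the plan is fixed in advance, and the tool names (`read_logs`, `extract_errors`, `summarize`) are hypothetical local stand-ins, not OpenAI tools.

```python
from collections import Counter
from typing import Any, Callable

# Hypothetical local "tools". Real o3/o4-mini tools (web search, Python, etc.)
# are selected and invoked by the model, not by your own code.
def read_logs(text: str) -> list[str]:
    """Split a raw log dump into non-empty lines."""
    return [line for line in text.splitlines() if line.strip()]

def extract_errors(lines: list[str]) -> Counter:
    """Count ERROR lines by message (everything after 'ERROR ')."""
    return Counter(
        line.split("ERROR ", 1)[1] for line in lines if "ERROR " in line
    )

def summarize(errors: Counter) -> str:
    """Produce a one-line summary of the most frequent error."""
    if not errors:
        return "No errors found."
    message, count = errors.most_common(1)[0]
    return f"Most frequent error: {message} ({count}x)"

TOOLS: dict[str, Callable[[Any], Any]] = {
    "read_logs": read_logs,
    "extract_errors": extract_errors,
    "summarize": summarize,
}

def run_chain(plan: list[str], initial_input: Any) -> Any:
    """Run each named tool in order, feeding every output into the next step."""
    result = initial_input
    for tool_name in plan:
        result = TOOLS[tool_name](result)
    return result

logs = """\
2025-01-01 12:00:01 INFO request ok
2025-01-01 12:00:02 ERROR timeout calling /orders
2025-01-01 12:00:03 ERROR timeout calling /orders
2025-01-01 12:00:04 ERROR 500 from /payments
"""
print(run_chain(["read_logs", "extract_errors", "summarize"], logs))
```

The value of real agentic chaining is that the model can change the plan mid-task (for example, searching the web after spotting an unfamiliar error), which a fixed list like this cannot do.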

“Thinking with Images”: From Perception to Action

A standout feature is the ability to use images as part of the reasoning process—not just for perception but for deep analysis and decision-making.

Practical Examples:

- Visual API Schema Review: Upload OpenAPI diagrams and get actionable feedback or transformation suggestions.

- Code Walkthroughs: Submit screenshots of code or logs and receive step-by-step debugging instructions.

- Engineering Problem Solving: Use diagrams or schematics as inputs for technical explanations or design recommendations.

This multimodal reasoning approach grounds AI outputs in real-world data, improving accuracy for tasks involving diagrams, data visualizations, and complex scenes.
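As a rough illustration of how an image reaches the model through the API, the sketch below builds a Chat Completions request body that pairs a text question with an image encoded as a base64 data URL. The content-part shape (`"type": "text"` and `"type": "image_url"`) follows OpenAI's vision input format as we understand it; the helper name `build_image_request` and the file name `schema-diagram.png` are hypothetical, and the sketch only builds the JSON rather than sending it.

```python
import base64
import json

def build_image_request(model: str, question: str, image_bytes: bytes,
                        mime_type: str = "image/png") -> dict:
    """Build a Chat Completions request body with one text part and one image part."""
    # Encode the raw bytes as a data URL so the image needs no public hosting.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime_type};base64,{encoded}"
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }

# In practice you would pass open("schema-diagram.png", "rb").read();
# placeholder bytes keep this example runnable without a file.
body = build_image_request(
    "o3",
    "Review this API schema diagram and list any endpoints missing auth.",
    b"\x89PNG placeholder",
)
print(json.dumps(body, indent=2))
```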

OpenAI o3 vs o4-mini: Key Differences for Developers

OpenAI o3: Maximum Performance

- Strengths:

  - Advanced code generation and debugging (multi-language)

  - Solves complex math and science problems

  - Excels at interpreting images, diagrams, and charts

- Use Cases: Ideal for advanced research, enterprise QA, and technical tasks where performance and deep reasoning are critical.

OpenAI o4-mini: Efficiency and Scale

- Strengths:

  - Strong performance in math, coding, and vision

  - Faster response times and much lower operational costs

- Use Cases: Suited for high-volume API endpoints, interactive applications, and projects needing scalability without sacrificing intelligence.
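One way to act on these differences is to route requests by workload. The policy below is purely hypothetical (the `Task` fields and the 100-requests-per-minute threshold are illustrative assumptions, not OpenAI guidance): it sends high-volume work to o4-mini and reserves o3 for tasks flagged as needing deep reasoning or image-heavy analysis.

```python
from dataclasses import dataclass

@dataclass
class Task:
    """Hypothetical description of an incoming AI request."""
    needs_deep_reasoning: bool = False  # e.g. multi-step debugging, research
    has_complex_images: bool = False    # e.g. dense diagrams or charts
    requests_per_minute: int = 1        # expected traffic for this endpoint

def pick_model(task: Task, high_volume_threshold: int = 100) -> str:
    """Choose between o3 and o4-mini using a simple, illustrative policy."""
    # High-traffic endpoints go to the cheaper, faster model first.
    if task.requests_per_minute >= high_volume_threshold:
        return "o4-mini"
    # Reserve the more expensive model for work that needs its extra depth.
    if task.needs_deep_reasoning or task.has_complex_images:
        return "o3"
    return "o4-mini"

print(pick_model(Task(needs_deep_reasoning=True)))                          # o3
print(pick_model(Task(needs_deep_reasoning=True, requests_per_minute=500)))  # o4-mini
print(pick_model(Task()))                                                   # o4-mini
```

Checking volume first means cost control wins over depth; if accuracy matters more than spend for your workload, swap the order of the two checks.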

Benchmark Results: How Do o3 and o4-mini Perform?

OpenAI’s published benchmarks show o3 leading on most tasks, including:

- Graduate-Level Q&A: GPQA Diamond

- Expert-Level Reasoning: Humanity’s Last Exam

- Mathematics: AIME 2024 and AIME 2025, where o4-mini slightly outscores o3

- Coding: SWE-bench Verified, Aider Polyglot, Codeforces

- Vision: MMMU, MathVista, and CharXiv, thanks to “thinking with images”

o4-mini also outperforms earlier reasoning models such as o1 and o3-mini on many of these benchmarks, which is notable given its lower cost and faster responses. For most API automation, regression testing, or bulk documentation tasks, o4-mini offers the best balance of cost and capability.

Figure: OpenAI o3-high vs o4-mini-high vs Google Gemini 2.5 Pro benchmarks

Context Window: Handling Large-Scale API Docs and Logs

A major advantage for API-focused teams is the expanded context window:

- Context Window: 200,000 tokens, enough to process large API specs, codebases, or transcripts in one go.

- Max Output Tokens: Up to 100,000 tokens per response, enough for comprehensive documentation, code snippets, or detailed reports. For these reasoning models, that budget also covers hidden reasoning tokens, so the visible answer can be shorter.

This enables end-to-end API workflows (e.g., uploading a full OpenAPI file, generating test cases, or summarizing results) in a single request.
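Before sending a large spec in one request, it helps to check that it fits. The sketch below uses the common rule of thumb of roughly four characters per token for English text, which is only an approximation (an exact count needs a real tokenizer). It also assumes that input and output, including reasoning tokens, share the 200,000-token window, and reserves 20,000 tokens for the response; the function names and that reserve are illustrative choices.

```python
CONTEXT_WINDOW = 200_000  # tokens, as stated above for o3 and o4-mini
CHARS_PER_TOKEN = 4       # rough heuristic for English text; not exact

def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division

def fits_in_context(text: str, reserved_for_output: int = 20_000) -> bool:
    """Check whether the prompt leaves `reserved_for_output` tokens free.

    Assumes input and output (including hidden reasoning tokens) share the window.
    """
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW

# A synthetic, repetitive OpenAPI-like fragment standing in for a real spec.
spec = "paths:\n" + "  /users/{id}:\n    get: {}\n" * 1_000
print(estimate_tokens(spec), fits_in_context(spec))
```

For borderline cases, replace the heuristic with a real tokenizer count before deciding to split the spec across requests.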

API Pricing: o3 vs o4-mini Cost Breakdown

o3 API Pricing

- Input: $10.00 / 1M tokens

- Cached Input: $2.50 / 1M tokens

- Output: $40.00 / 1M tokens

Premium pricing reflects o3’s advanced capabilities—best for teams where accuracy and reasoning depth are paramount.

o4-mini API Pricing

- Input: $1.10 / 1M tokens

- Cached Input: $0.275 / 1M tokens

- Output: $4.40 / 1M tokens

o4-mini is roughly 9x cheaper per token across input, cached input, and output, making it the go-to choice for high-traffic, scalable applications or continuous API monitoring.
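To compare the two price lists on a concrete workload, the sketch below encodes the per-million-token rates quoted above and estimates the cost of a single request. The rates are hard-coded from this article and may change, so treat them as example inputs. Note also that for reasoning models, hidden reasoning tokens are billed as output tokens, so real output counts can exceed the visible response.

```python
# USD per 1M tokens, copied from the price lists above (subject to change).
PRICES = {
    "o3":      {"input": 10.00, "cached_input": 2.50,  "output": 40.00},
    "o4-mini": {"input": 1.10,  "cached_input": 0.275, "output": 4.40},
}

def request_cost(model: str, input_tokens: int, output_tokens: int,
                 cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    `input_tokens` is the uncached portion of the prompt; `output_tokens`
    should include reasoning tokens, which are billed as output.
    """
    p = PRICES[model]
    total = (input_tokens * p["input"]
             + cached_input_tokens * p["cached_input"]
             + output_tokens * p["output"])
    return total / 1_000_000

# Example workload: 50k fresh input tokens and 10k output tokens per request.
for model in PRICES:
    print(model, round(request_cost(model, 50_000, 10_000), 4))
```

For this example workload, o3 comes to about $0.90 per request versus about $0.099 for o4-mini, the same roughly 9x gap as the per-token rates.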

Where Can You Use o3 and o4-mini?

OpenAI is rapidly rolling out these models across platforms:

- ChatGPT Plus, Pro, Team: Immediate access to o3, o4-mini, and o4-mini-high

- Enterprise and Edu: Access within a week of launch

- API Developers: Available via Chat Completions API and Responses API, supporting enhanced tool use

- Third-Party Integrations: Now available in GitHub Copilot, GitHub Models, and the Cursor code editor
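For API developers, the two endpoints take slightly different request bodies. The sketch below builds both as plain dictionaries using only the standard library: Chat Completions takes a `messages` list and a top-level `reasoning_effort`, while the Responses API takes `input` and a nested `reasoning` object. These field names reflect OpenAI's API reference as we understand it, so check the current reference before relying on them; the `send` helper (which expects an `OPENAI_API_KEY` environment variable) is included for completeness but not called here.

```python
import json
import os
import urllib.request

API_BASE = "https://api.openai.com/v1"

def chat_completions_body(model: str, prompt: str, effort: str = "medium") -> dict:
    """Request body for POST /v1/chat/completions."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,  # "low", "medium", or "high"
    }

def responses_body(model: str, prompt: str, effort: str = "medium") -> dict:
    """Request body for POST /v1/responses."""
    return {
        "model": model,
        "input": prompt,
        "reasoning": {"effort": effort},
    }

def send(path: str, body: dict) -> dict:
    """POST a JSON body to the OpenAI API and return the parsed JSON reply."""
    request = urllib.request.Request(
        f"{API_BASE}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)

prompt = "Generate three edge-case test ideas for GET /users/{id}."
print(json.dumps(chat_completions_body("o4-mini", prompt), indent=2))
print(json.dumps(responses_body("o4-mini", prompt), indent=2))
# send("/responses", responses_body("o4-mini", prompt))  # requires an API key
```

In production you would more likely use OpenAI's official SDK, which wraps these same endpoints; the raw bodies are shown here to make the difference between the two APIs visible.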

💡 Apidog supports rapid adoption of new AI models, allowing teams to automate API documentation, testing, and collaboration workflows with the latest OpenAI capabilities—while keeping productivity and cost efficiency at the forefront.

Conclusion: Smarter AI Tools for API Teams

OpenAI’s o3 and o4-mini models represent a leap forward for developers and engineering teams. With advanced reasoning, seamless tool integration, and multimodal capabilities, they enable new levels of automation, insight, and productivity. Whether you need the raw power of o3 or the efficiency of o4-mini, both models are set to drive the next generation of intelligent API solutions.

Explore how integrating these models with platforms like Apidog can streamline your API development, testing, and documentation pipelines—making your team more agile and your workflows smarter.

💡 Ready to boost your API workflow? Generate beautiful documentation, enable team productivity, and replace Postman affordably with Apidog.