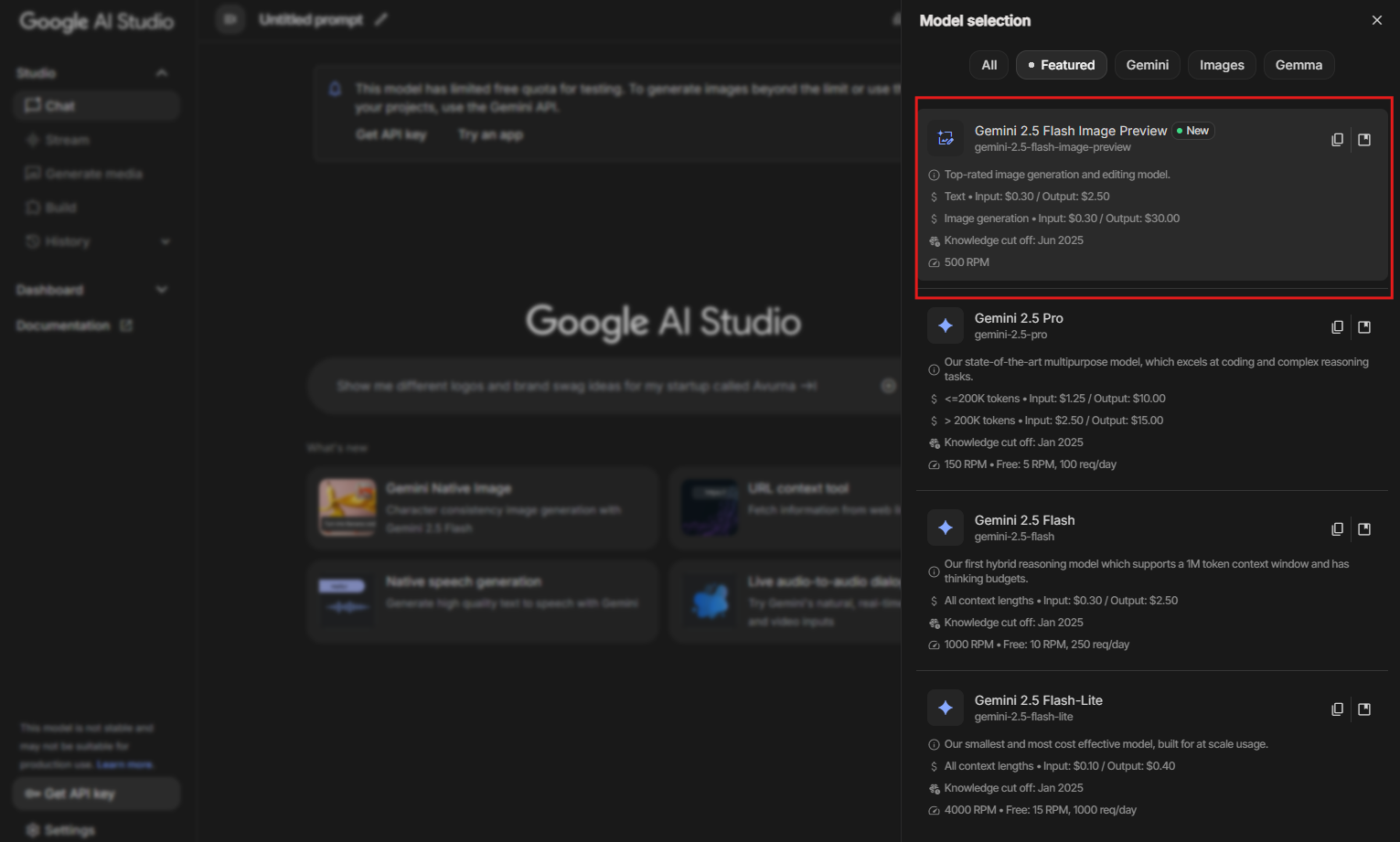

Google recently unveiled Nano Banana, a breakthrough in AI-driven image editing that sets new standards for consistency and creativity. This feature, officially known as Gemini 2.5 Flash Image Preview, enables users to generate and edit images with remarkable precision, maintaining subject likeness across multiple modifications. Engineers and developers can now access this capability through the Gemini API and integrate it into custom applications for tasks ranging from simple photo enhancements to complex scene compositions.

As AI models evolve, tools like Nano Banana empower creators to push boundaries in digital imagery. This article guides you through the technical aspects of using Nano Banana via API, from initial setup to advanced techniques. Developers harness this model to build applications that transform text prompts into visually coherent edits, and the following sections detail each step.

Understanding Nano Banana and Gemini 2.5 Flash Image Preview

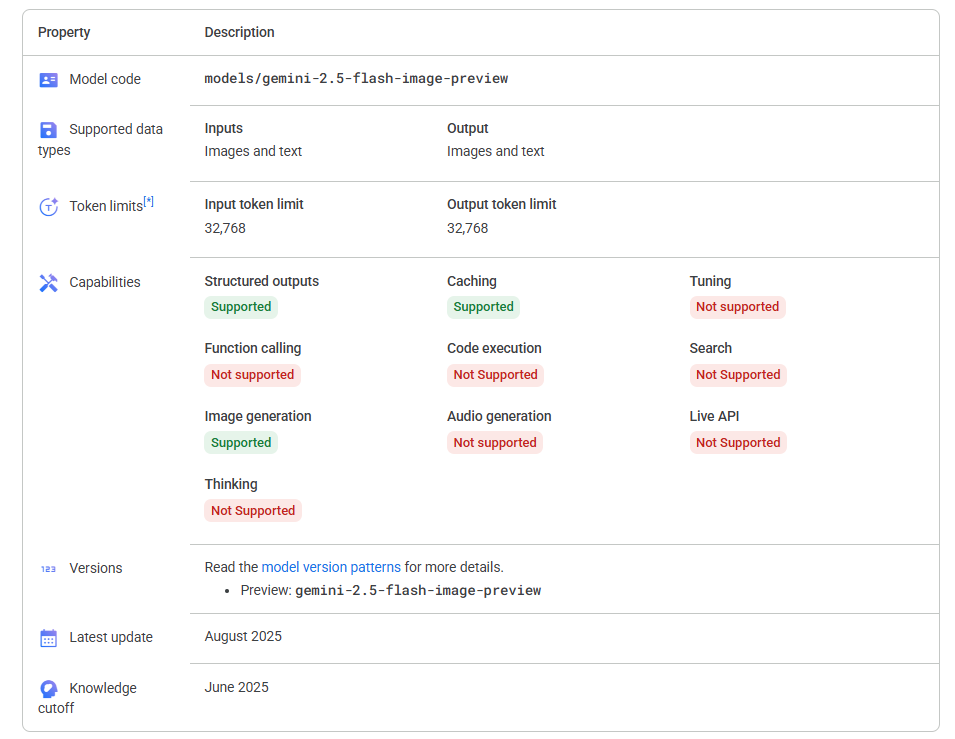

Nano Banana represents Google's latest advancement in multimodal AI, specifically tailored for image generation and editing. "Nano Banana" is the playful codename the model carried before launch; officially, it is Gemini 2.5 Flash Image. As a Flash-tier model, it is designed for speed and efficiency while still delivering high-fidelity results. Unlike traditional image editors, this model excels at maintaining character consistency: faces, poses, and details remain true to the original subject even after extensive changes.

Moreover, Gemini 2.5 Flash Image Preview integrates reasoning capabilities, allowing the model to "think" through edits before applying them. This helps outputs avoid common pitfalls like distorted features or mismatched lighting. For instance, if you instruct the model to change a person's outfit from casual to formal, it preserves facial expressions and body proportions.

The model's architecture builds on previous Gemini iterations, incorporating enhancements in vision-language processing. It supports inputs like text prompts combined with images, enabling multi-turn interactions where you refine edits iteratively. Google positions Nano Banana as a leader in image editing benchmarks, outperforming competitors in consistency and quality.

In addition, the model includes built-in safeguards: every image it generates or edits carries an invisible SynthID digital watermark that marks it as AI-generated, and images created in the Gemini app also carry a visible watermark. This promotes ethical use, particularly in professional settings where authenticity matters. Developers adopt Nano Banana for applications in e-commerce, design, and content creation, where rapid prototyping of visuals accelerates workflows.

Prerequisites for Using the Nano Banana API

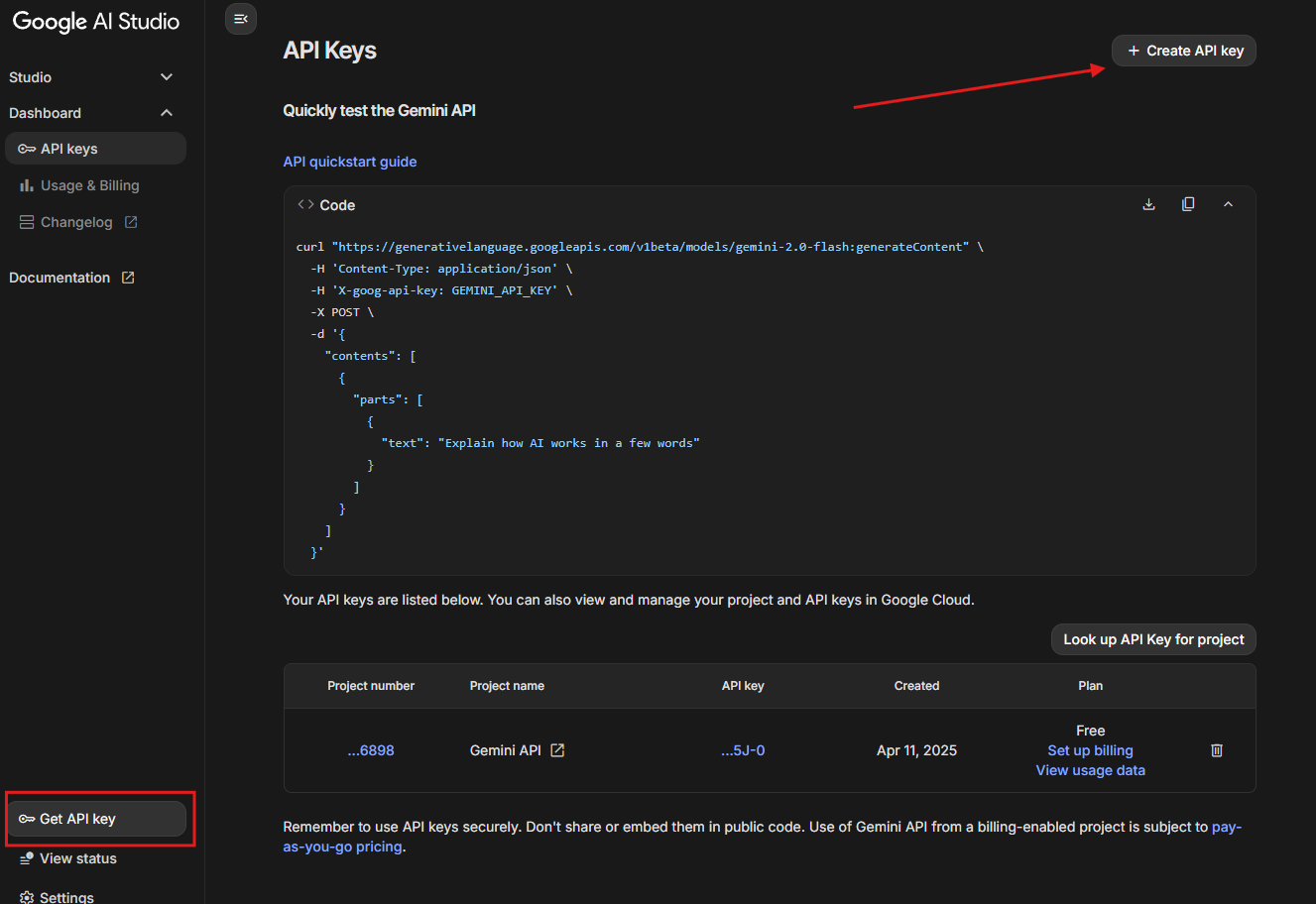

Before you implement Nano Banana, ensure your setup meets a few essential requirements. First, you need a Google account for Google AI Studio, or a Google Cloud project if you plan to use Vertex AI; the Gemini API is available through both. Either platform provides access to gemini-2.5-flash-image-preview, along with quota management for API calls.

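Next, verify programming language support. The API offers SDKs for Python, JavaScript, Java, and Go, plus a REST interface, but Python remains the most straightforward for beginners due to its extensive libraries. Install the Google AI Python SDK used throughout this article with pip install google-generativeai. (Google now recommends its newer Google Gen AI SDK, installed with pip install google-genai, for new projects; its interface differs from the examples here.)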

Additionally, prepare your environment with an API key. Navigate to Google AI Studio and generate a key restricted to Gemini services.

Security best practices dictate using environment variables to store this key, preventing exposure in code repositories.
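A minimal sketch of that practice in Python, assuming the key lives in a GEMINI_API_KEY environment variable (the name the full script later in this article reads). The helper name load_api_key is hypothetical; it simply fails early with a clear message instead of letting an unauthenticated request reach the API.

```python
import os


def load_api_key(var_name="GEMINI_API_KEY"):
    """Read the API key from the environment (hypothetical helper, not part of any SDK)."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail before any network call rather than sending an empty key
        raise RuntimeError(f"Set the {var_name} environment variable first.")
    return key
```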

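Furthermore, familiarize yourself with image formats. Nano Banana accepts common formats such as JPEG and PNG as inputs. The Python SDK takes the raw file bytes plus a MIME type, while REST requests carry the same bytes as a base64-encoded string; generated images come back the same way, labeled with their MIME type. Ensure your system handles file I/O efficiently, especially for batch processing.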
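When you call the REST endpoint directly (for example from Apidog or a plain HTTP client), you handle the base64 step yourself. The sketch below is illustrative: sniff_mime_type and to_inline_part are hypothetical helpers, and it assumes the camelCase inlineData/mimeType field names of the REST JSON format. It checks the file's leading bytes rather than trusting the extension.

```python
import base64
from pathlib import Path

# Leading "magic bytes" that identify the two formats discussed above
SIGNATURES = {
    b"\x89PNG\r\n\x1a\n": "image/png",
    b"\xff\xd8\xff": "image/jpeg",
}


def sniff_mime_type(data):
    """Detect PNG or JPEG from the file header instead of trusting the extension."""
    for signature, mime_type in SIGNATURES.items():
        if data.startswith(signature):
            return mime_type
    raise ValueError("unsupported image format (expected PNG or JPEG)")


def to_inline_part(data):
    """Wrap raw image bytes as a REST inlineData part carrying base64 text."""
    return {
        "inlineData": {
            "mimeType": sniff_mime_type(data),
            "data": base64.b64encode(data).decode("ascii"),
        }
    }


def load_inline_part(path):
    """Read an image file from disk and wrap it for a REST request body."""
    return to_inline_part(Path(path).read_bytes())
```

The Python SDK does not need this step, since it accepts the raw bytes directly.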

Finally, review usage limits. Free tiers offer limited requests per minute, while paid plans scale for production. Monitor these to avoid throttling during development.
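To avoid throttling during development, you can pace requests on the client side. This is a minimal sliding-window limiter sketch; RequestThrottle is a hypothetical class, and the limit you pass in should come from your project's actual quota page, since limits vary by tier and model.

```python
import time
from collections import deque


class RequestThrottle:
    """Sliding-window limiter: at most max_requests calls per window seconds (hypothetical)."""

    def __init__(self, max_requests, window=60.0, clock=time.monotonic, sleep=time.sleep):
        self.max_requests = max_requests
        self.window = window
        self._clock = clock
        self._sleep = sleep
        self._sent = deque()  # timestamps of requests still inside the window

    def _purge(self, now):
        # Drop timestamps that have aged out of the window
        while self._sent and now - self._sent[0] >= self.window:
            self._sent.popleft()

    def wait(self):
        """Block until one more request fits in the window, then record it."""
        now = self._clock()
        self._purge(now)
        while len(self._sent) >= self.max_requests:
            # Sleep until the oldest recorded request leaves the window
            self._sleep(self.window - (now - self._sent[0]))
            now = self._clock()
            self._purge(now)
        self._sent.append(now)
```

Call throttle.wait() immediately before each generate_content call. The clock and sleep parameters exist only so the limiter can be tested without real delays.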

Setting Up Your Development Environment for Gemini 2.5 Flash Image Preview

Engineers configure their environments methodically to integrate Nano Banana effectively. Begin by cloning a starter repository if available, such as Google's quickstart for image editing. This provides boilerplate code for authentication and basic calls.

Then, import the necessary modules. In Python, use import os and import google.generativeai as genai, then configure with genai.configure(api_key=os.getenv('GEMINI_API_KEY')). This step authenticates your session.

Moreover, select the model explicitly: model = genai.GenerativeModel('gemini-2.5-flash-image-preview'). This targets the Nano Banana variant optimized for images.

To enhance testing, incorporate Apidog. Download and install it from the official site, then create a new project for Gemini API endpoints. Apidog allows you to mock requests, inspect headers, and simulate errors, which proves invaluable when debugging Nano Banana interactions.

In practice, set up a virtual environment using venv to isolate dependencies. This prevents conflicts with other projects and maintains reproducibility.

Obtaining API Access to Nano Banana

Google streamlines API access for developers. Start in Google AI Studio, where you can experiment with Gemini 2.5 Flash Image Preview in a no-code interface before transitioning to code.

Once ready, if you plan to call the model through Vertex AI rather than with an AI Studio API key, enable the Vertex AI API in your Google Cloud console and assign roles like "Vertex AI User" to your service account for secure access.

Additionally, handle billing. While initial trials are free, enable billing for sustained use. Google offers credits for new users, easing the entry barrier.

For enterprise setups, consider Vertex AI's managed endpoints, which scale Nano Banana for high-throughput applications.

Basic API Calls for Image Generation with Gemini 2.5 Flash Image Preview

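Developers initiate image generation with simple prompts. Construct a request: response = model.generate_content(["Generate an image of a nano banana in a futuristic setting."]). The model returns its output as a list of parts, which can include text as well as inline image data. The Python SDK hands you the image as raw bytes; over REST, the same data arrives base64-encoded.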

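Next, save the output. With the Python SDK, inline_data.data already holds the decoded image bytes, so no base64.b64decode call is needed. Because a response can contain text parts as well as image parts, loop over response.parts, pick the part whose inline_data is populated, and write its data to output.png in binary ('wb') mode.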

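Furthermore, incorporate safety settings to filter inappropriate content and pass them with the request: safety_settings = [{'category': 'HARM_CATEGORY_HATE_SPEECH', 'threshold': 'BLOCK_MEDIUM_AND_ABOVE'}], then model.generate_content(prompt, safety_settings=safety_settings).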

Test these calls in Apidog by sending a POST request to https://generativelanguage.googleapis.com/v1beta/models/gemini-2.5-flash-image-preview:generateContent with a JSON body containing your contents, and passing your API key in the x-goog-api-key header.
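For reference outside Apidog, the same request can be sketched with Python's standard library alone. This assumes the v1beta REST shapes (a contents array of parts in the request, base64 inlineData parts in the response) and the x-goog-api-key header; build_request, extract_images, and generate are hypothetical helper names. The optional safetySettings field carries the same category/threshold pairs as the safety-settings example above.

```python
import base64
import json
import urllib.request

# Endpoint used in the Apidog setup (v1beta REST API)
ENDPOINT = (
    "https://generativelanguage.googleapis.com/v1beta/models/"
    "gemini-2.5-flash-image-preview:generateContent"
)


def build_request(api_key, prompt, safety_settings=None):
    """Assemble a generateContent POST request for a text-only prompt (hypothetical helper)."""
    body = {"contents": [{"role": "user", "parts": [{"text": prompt}]}]}
    if safety_settings:
        body["safetySettings"] = safety_settings
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def extract_images(response_json):
    """Return (mime_type, image_bytes) pairs; REST delivers image data as base64 text."""
    images = []
    for candidate in response_json.get("candidates", []):
        for part in candidate.get("content", {}).get("parts", []):
            inline = part.get("inlineData")
            if inline:
                images.append((inline["mimeType"], base64.b64decode(inline["data"])))
    return images


def generate(api_key, prompt):
    """Send the request and decode any returned images (performs a real network call)."""
    with urllib.request.urlopen(build_request(api_key, prompt)) as resp:
        return extract_images(json.load(resp))
```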

Advanced Image Editing Techniques Using Nano Banana

Nano Banana shines in editing scenarios. Pass the image alongside an instruction: response = model.generate_content([{'mime_type': 'image/png', 'data': pathlib.Path('input.png').read_bytes()}, "Change the background to a beach."]). The SDK takes the raw bytes plus a MIME type, so you do not base64-encode the file yourself.

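Moreover, enable multi-turn editing with a chat session, which keeps the conversation history for you: create chat = model.start_chat(), then call chat.send_message(...) for each refinement, for example sending the image with a first instruction and following up with "Now make it sunset."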
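The SDK's chat session tracks history for you. Over REST (for instance when replaying a session in Apidog), generateContent is stateless, so each request must resend the earlier turns. A minimal sketch of that bookkeeping, with Conversation as a hypothetical class name:

```python
class Conversation:
    """Tracks the alternating user/model turns a stateless REST call must resend (hypothetical)."""

    def __init__(self):
        self.contents = []

    def add_user(self, *parts):
        # Each part is a dict such as {"text": ...} or {"inlineData": {...}}
        self.contents.append({"role": "user", "parts": list(parts)})

    def add_model_reply(self, response_json):
        # Keep the model's turn (text and image parts) exactly as returned
        content = response_json["candidates"][0]["content"]
        self.contents.append({"role": "model", "parts": content.get("parts", [])})

    def request_body(self):
        """Body for the next generateContent call, including every earlier turn."""
        return {"contents": self.contents}
```

Because earlier image parts are resent on every turn, request bodies grow quickly; keep an eye on payload size in long editing sessions.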

Blend images: Provide multiple inputs and instruct blending, such as merging a portrait with a landscape.
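A blend request is simply several image parts followed by one text instruction. The sketch below builds such a REST body; build_blend_body is hypothetical, and referring to the images by their order in the prompt ("the first image", "the second image") is a prompting assumption rather than a documented requirement.

```python
import base64


def build_blend_body(images, instruction):
    """Put each (mime_type, bytes) image in its own part, then the text instruction."""
    parts = [
        {"inlineData": {"mimeType": mime, "data": base64.b64encode(data).decode("ascii")}}
        for mime, data in images
    ]
    parts.append({"text": instruction})
    return {"contents": [{"role": "user", "parts": parts}]}
```

For example, build_blend_body([("image/jpeg", portrait_bytes), ("image/png", landscape_bytes)], "Place the person from the first image into the landscape from the second.") produces a single user turn with three parts.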

Apply styles: Prompt "Apply the texture of banana peels to this object," leveraging Nano Banana's creative controls.

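Video generation is outside the model's scope, but you can approximate it by editing frames sequentially with custom scripting; check consistency between frames yourself, since each frame is edited independently.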

Integrating Apidog for Efficient API Testing

Apidog enhances your Nano Banana workflow. Create collections for Gemini endpoints, parameterize prompts, and run automated tests.

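For example, script a test case in Apidog that validates image-editing responses by checking that they contain an inlineData part with an image MIME type. SynthID watermarks are embedded invisibly in the pixels, so they cannot be verified from the JSON response itself.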
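The same checks can be expressed in Python, for example to run in CI alongside an Apidog collection. This sketch assumes the REST response shape (candidates, finishReason, content.parts[].inlineData); check_image_response is a hypothetical helper that returns a list of problems rather than raising, so a test can report everything at once.

```python
import base64
import binascii


def check_image_response(response_json):
    """Return a list of problems found in a generateContent response; empty means it passed."""
    candidates = response_json.get("candidates") or []
    if not candidates:
        # Blocked prompts come back without candidates (see promptFeedback)
        return ["no candidates in response"]
    problems = []
    candidate = candidates[0]
    reason = candidate.get("finishReason")
    if reason not in (None, "STOP"):
        problems.append(f"unexpected finishReason: {reason}")
    parts = candidate.get("content", {}).get("parts", [])
    images = [p["inlineData"] for p in parts if "inlineData" in p]
    if not images:
        problems.append("no image part in response")
    for inline in images:
        if not inline.get("mimeType", "").startswith("image/"):
            problems.append(f"non-image MIME type: {inline.get('mimeType')}")
        try:
            if not base64.b64decode(inline.get("data", ""), validate=True):
                problems.append("image data is empty")
        except binascii.Error:
            problems.append("image data is not valid base64")
    return problems
```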

This integration reduces development time, as Apidog visualizes JSON responses and handles authentication seamlessly.

Code Examples in Python for Gemini 2.5 Flash Image Preview

Here, a full script demonstrates editing:

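```python
import os
import pathlib

import google.generativeai as genai

# Authenticate with the key stored in an environment variable
genai.configure(api_key=os.getenv('GEMINI_API_KEY'))

model = genai.GenerativeModel('gemini-2.5-flash-image-preview')

# The SDK accepts raw bytes plus a MIME type; no manual base64 encoding is needed
image_part = {'mime_type': 'image/jpeg', 'data': pathlib.Path('banana.jpg').read_bytes()}

prompt = "Edit this banana image to make it nano-sized in a lab setting."

response = model.generate_content([image_part, prompt])

# A response can mix text and image parts, so save the first image part found
for part in response.parts:
    if part.inline_data and part.inline_data.data:
        # inline_data.data already holds decoded image bytes
        with open('edited_nano_banana.png', 'wb') as out:
            out.write(part.inline_data.data)
        break
    if part.text:
        print(part.text)  # any accompanying commentary from the model
```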

This code uploads a banana image, applies the edit, and saves the result.

Extend it for batch processing: Loop over a list of images and prompts.
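A minimal batch loop, assuming a hypothetical edit_fn(image_bytes, prompt) wrapper that calls the model and returns the edited image bytes (for example, the generate_content and part-scanning logic from the script above). Failed items are collected rather than aborting the run; run_batch is likewise a hypothetical name.

```python
from pathlib import Path


def run_batch(jobs, edit_fn, out_dir="edited"):
    """Run edit_fn(image_bytes, prompt) -> bytes over (image_path, prompt) jobs.

    edit_fn is a hypothetical wrapper around the model call. Errors are
    collected per item so one bad input does not stop the batch.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    written, failed = [], []
    for image_path, prompt in jobs:
        try:
            result = edit_fn(Path(image_path).read_bytes(), prompt)
            # Assumes PNG output, matching the single-image script
            target = out / f"{Path(image_path).stem}_edited.png"
            target.write_bytes(result)
            written.append(target)
        except Exception as exc:  # broad on purpose: record and move on
            failed.append((image_path, exc))
    return written, failed
```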

Handle errors gracefully with try-except blocks for exceeded quotas or invalid inputs.
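Quota errors are usually transient, so retrying with exponential backoff is a common pattern; invalid inputs are not, and retrying them only wastes quota. In this sketch call_with_retries is hypothetical, and you pass in the exception types that deserve a retry.

```python
import random
import time


def call_with_retries(fn, retriable, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(), retrying only `retriable` errors with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except retriable:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Waits base_delay * 1, 2, 4, ... plus up to base_delay of random jitter
            sleep(base_delay * (2 ** attempt) + random.uniform(0, base_delay))
```

With the google-generativeai SDK, HTTP 429 quota errors typically surface as google.api_core.exceptions.ResourceExhausted, so a call might look like call_with_retries(lambda: model.generate_content([image_part, prompt]), retriable=(ResourceExhausted,)); confirm the exception type against the errors you actually observe.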

Best Practices and Limitations of Nano Banana API

Adopt rate limiting in your code to comply with API quotas. Cache responses for repeated queries to optimize costs.
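A minimal in-memory cache sketch, keyed on a SHA-256 hash of the exact image bytes and prompt. ResponseCache is a hypothetical class; swap the dictionary for disk or shared storage if results must survive restarts.

```python
import hashlib


class ResponseCache:
    """In-memory cache for edit results, keyed on exact image bytes and prompt (hypothetical)."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def make_key(image_bytes, prompt):
        digest = hashlib.sha256()
        # Length prefix keeps the image/prompt boundary unambiguous
        digest.update(len(image_bytes).to_bytes(8, "big"))
        digest.update(image_bytes)
        digest.update(prompt.encode("utf-8"))
        return digest.hexdigest()

    def get_or_call(self, image_bytes, prompt, call):
        """Return a cached result, calling call(image_bytes, prompt) only on a miss."""
        key = self.make_key(image_bytes, prompt)
        if key not in self._store:
            self._store[key] = call(image_bytes, prompt)
        return self._store[key]
```

Image generation is not deterministic, so a cache hit returns the first result rather than a fresh variation; bypass the cache when you want alternatives.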

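Additionally, validate inputs: ensure images stay within the documented size limits (the Gemini API caps inline requests at 20 MB in total, images included; larger files go through its Files API) and keep prompts concise for better results.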
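A pre-flight check sketch that catches bad inputs before they cost an API call. validate_inputs is hypothetical, and MAX_IMAGE_BYTES is a self-imposed per-image budget chosen for illustration, not an official limit; adjust it to your needs and to the documented request limits.

```python
from pathlib import Path

# Illustrative, self-imposed per-image budget; not an official API limit
MAX_IMAGE_BYTES = 4 * 1024 * 1024
ALLOWED_SUFFIXES = {".png", ".jpg", ".jpeg"}


def validate_inputs(image_path, prompt):
    """Return a list of problems to fix before spending an API call (hypothetical helper)."""
    problems = []
    path = Path(image_path)
    if path.suffix.lower() not in ALLOWED_SUFFIXES:
        problems.append(f"unsupported extension: {path.suffix or '(none)'}")
    if not path.is_file():
        problems.append(f"file not found: {image_path}")
    elif path.stat().st_size > MAX_IMAGE_BYTES:
        problems.append(f"image is {path.stat().st_size} bytes; budget is {MAX_IMAGE_BYTES}")
    if not prompt.strip():
        problems.append("prompt is empty")
    return problems
```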

Limitations include occasional inconsistencies in complex scenes and regional availability restrictions. Nano Banana performs best with clear, descriptive prompts.

Monitor updates via Google DeepMind's channels, as preview models like gemini-2.5-flash-image-preview evolve rapidly.

Conclusion

Nano Banana, through the Gemini 2.5 Flash Image Preview API, revolutionizes image editing for developers. By following this guide, you implement robust solutions that leverage its strengths in consistency and creativity. Remember, tools like Apidog amplify your efficiency—download it today to elevate your API interactions.

As you experiment, small adjustments in prompts yield significant improvements in outputs. Continue exploring to unlock Nano Banana's full potential in your projects.