Developers constantly seek efficient tools that accelerate coding workflows while maintaining high accuracy. xAI introduces the Grok Code Fast 1 API, a specialized model designed for agentic coding tasks. This API stands out by providing visible reasoning traces in responses, which enable users to guide and refine outputs effectively. As a result, programmers achieve faster iterations in complex projects.

Furthermore, the Grok Code Fast 1 API integrates seamlessly with modern development environments, supporting large context windows and economical pricing. Engineers leverage it for tasks ranging from code generation to debugging.

This guide shows you how to access and use the API in practice. It starts with the model's core features and then moves on to implementation details.

Understanding the Grok Code Fast 1 API

xAI engineers the Grok Code Fast 1 API as a reasoning model optimized for speed and cost-efficiency. Specifically, the model excels in agentic coding, where it autonomously handles tasks like writing functions, optimizing algorithms, and integrating systems. Unlike general-purpose models, it focuses on providing traceable reasoning, which means responses include step-by-step logic that users inspect and adjust.

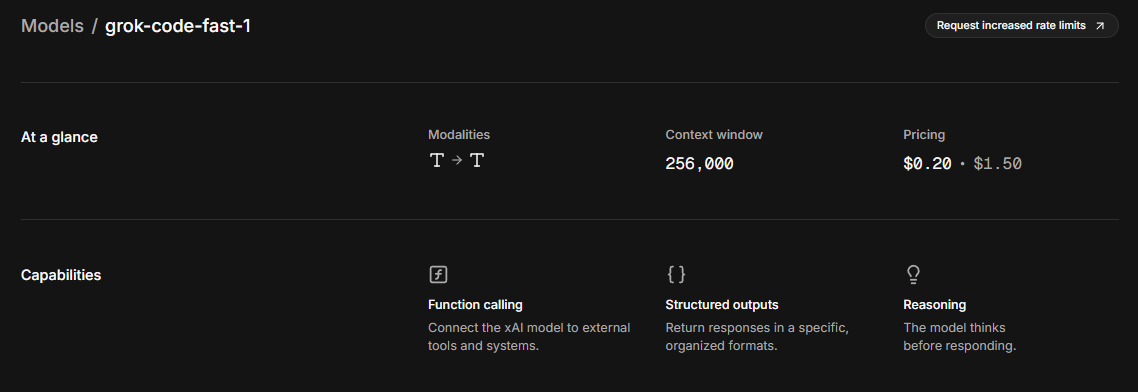

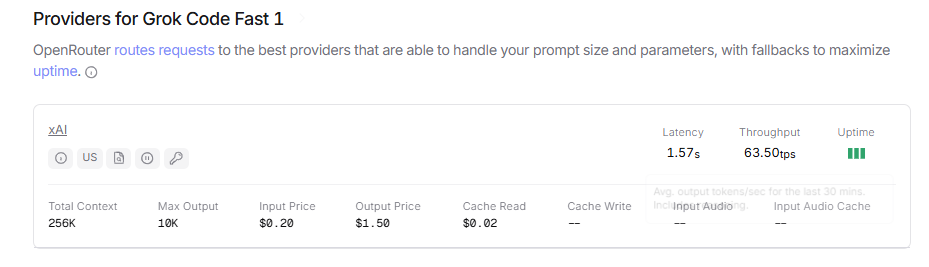

Moreover, the API supports a context window of 256,000 tokens. This capacity allows developers to input extensive codebases or documentation without truncation issues. The model operates in the us-east-1 region, ensuring low-latency responses for North American users. Capabilities include function calling, where the API connects to external tools, and structured outputs that format responses in JSON or other schemas for easy parsing.

However, it lacks live search functionality, so users supply all necessary data in prompts. Pricing remains competitive: input tokens cost $0.20 per million, output tokens $1.50 per million, and cached tokens $0.02 per million. Rate limits enforce 480 requests per minute and 2,000,000 tokens per minute, preventing abuse while accommodating high-volume usage.
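
To make the pricing concrete, here is a minimal cost estimator built only from the per-million-token prices quoted above. It assumes `input_tokens` counts uncached input and that cached tokens are billed separately at the cached rate; the function name and that split are illustrative, not part of any xAI tooling.

```python
# Prices in USD per one million tokens, as quoted in this guide.
PRICE_PER_MILLION = {"input": 0.20, "output": 1.50, "cached": 0.02}


def estimate_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Return the estimated USD cost of a single request.

    Assumption: input_tokens excludes cached tokens, which are
    billed separately at the cheaper cached rate.
    """
    total = (
        input_tokens * PRICE_PER_MILLION["input"]
        + output_tokens * PRICE_PER_MILLION["output"]
        + cached_tokens * PRICE_PER_MILLION["cached"]
    )
    return total / 1_000_000
```

For example, a request with 50,000 fresh input tokens, 4,000 output tokens, and 150,000 cached tokens comes to roughly $0.019 under these assumptions.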

Additionally, the Grok Code Fast 1 API builds on xAI's broader ecosystem and is reported to outperform models like LLaMA on code benchmarks such as HumanEval. Developers appreciate its low cost, as cached inputs reduce spend significantly in iterative workflows. The next section covers the setup you need to access these features directly.

Prerequisites for Accessing the Grok Code Fast 1 API

Before you initiate API calls, gather essential requirements. First, obtain an X account, as xAI ties authentication to this platform. Users log in via X credentials to generate keys securely.

Next, install Python 3.8 or higher, since the official SDK relies on it. Developers also need pip for package management. For REST-based access, prepare an OpenAI-compatible client library. Additionally, ensure your environment supports environment variables for storing sensitive keys.

Furthermore, familiarize yourself with gRPC basics, as the xAI SDK communicates over this protocol rather than plain REST. gRPC can improve performance, but it means you go through the SDK instead of hand-written HTTP calls. If you prefer REST, sign up for OpenRouter, which proxies requests through an OpenAI-compatible endpoint.

Security considerations play a key role too. You configure Access Control Lists (ACLs) during key creation to limit permissions, such as sampler:write for text completion. Finally, verify your setup by running a simple command to confirm access. With these in place, you proceed to key generation confidently.

Generating Your xAI API Key for Grok Code Fast 1

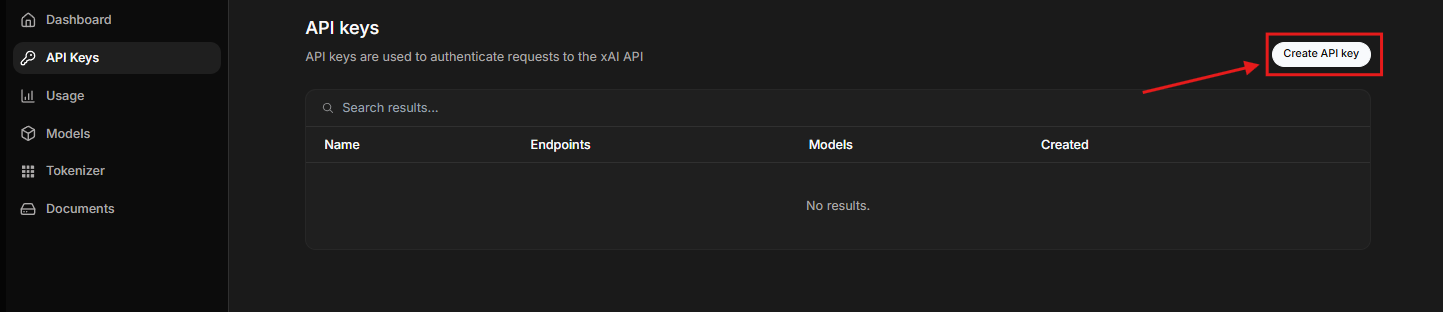

xAI streamlines key creation through its PromptIDE platform. You begin by navigating to ide.x.ai and logging in with your X account. This step authenticates your identity seamlessly.

Once inside, click the profile icon in the top-right corner. From the dropdown, select "API Keys." The interface displays existing keys or prompts you to create one. Click "Create API Key" to open a customization window.

Here, you define ACLs to control access. For Grok Code Fast 1 API usage, assign permissions like sampler:write for basic completions or broader scopes for advanced features. After setting these, save the key. The platform generates and displays it immediately. Copy it to a secure location, as it appears only once.

Moreover, you manage keys from this dashboard: edit permissions, delete obsolete ones, or regenerate if compromised. Store the key in an environment variable named XAI_API_KEY to avoid hardcoding in scripts. This practice enhances security across projects.

To verify access once the SDK is installed (covered in the next section), run this Python snippet: import xai_sdk; xai_sdk.does_it_work(). A success message confirms your setup.

Installing and Configuring the xAI SDK

The xAI SDK provides the primary interface for direct API access. You install it via pip with a single command: pip install xai-sdk. This pulls the latest version, compatible with Python environments.

After installation, export your API key as an environment variable: export XAI_API_KEY=your_key_here. This step integrates the key without exposing it in code.
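
On the Python side, a small helper can read that variable and fail early with a clear message when it is missing. This is a minimal sketch; `load_api_key` is a hypothetical helper name, not part of the xAI SDK.

```python
import os


def load_api_key(var_name: str = "XAI_API_KEY") -> str:
    """Read the API key from the environment instead of hardcoding it."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Failing here is easier to debug than an authentication error later.
        raise RuntimeError(f"{var_name} is not set; export it before running this script.")
    return key
```

Calling `load_api_key()` at startup keeps the key out of source control and surfaces a missing variable before any request is sent.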

Furthermore, the SDK handles gRPC communications transparently. Developers import xai_sdk and instantiate a Client object. For instance, client = xai_sdk.Client() initializes the connection.

To confirm everything works, run the verification call from the previous section (xai_sdk.does_it_work()). If it fails, check the key's ACLs and your network settings. The SDK also supports asynchronous operations, which suit high-throughput applications.

Additionally, explore the SDK documentation for model-specific parameters. For Grok Code Fast 1, specify the model name "grok-code-fast-1" in requests. With configuration complete, you craft your first call.

Making Your First API Call with Grok Code Fast 1

You construct basic requests using the SDK's sampler module. Start with a simple text completion example to test the waters.

Import necessary modules: import asyncio and import xai_sdk. Define an async function for the main logic. Inside, create a client and set a prompt, such as "Write a Python function to calculate Fibonacci numbers."

Then, iterate over the response: async for token in client.sampler.sample(prompt, max_len=100, model="grok-code-fast-1"): print(token.token_str, end=""). This streams tokens, displaying reasoning traces inline.

Run the function with asyncio.run(main()). The API responds quickly, leveraging its speed for agentic tasks. Observe how it reasons step-by-step before outputting code.

You can also adjust sampling parameters: temperature controls randomness, and top_p restricts sampling to the most probable tokens. Higher values yield more varied responses, while lower values make output more deterministic. Reuse the same prompt for repeated calls so that cached input tokens cut costs.

If your application is synchronous, check whether your installed SDK version offers blocking calls. With a first call working, you can move on to more complex integrations.

Accessing Grok Code Fast 1 API via OpenRouter for REST Compatibility

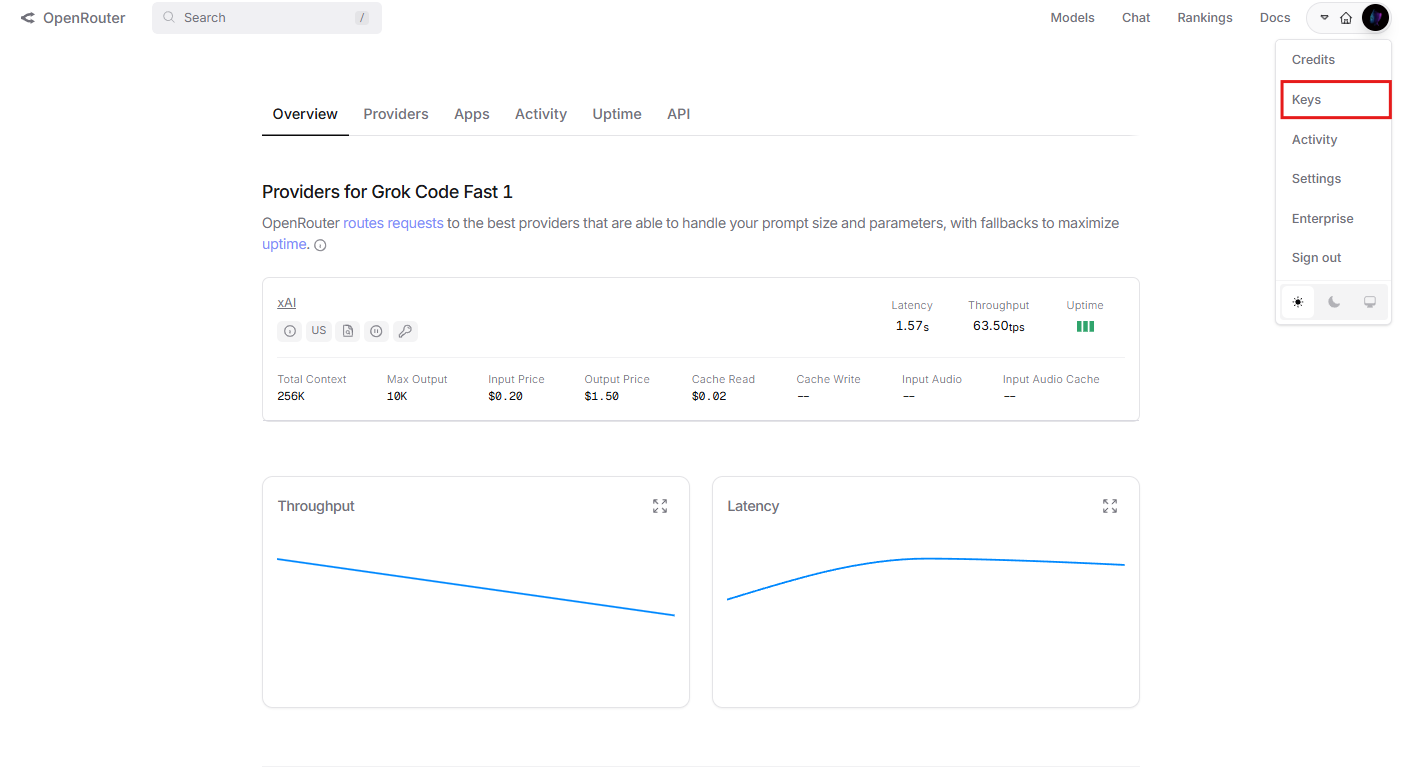

If gRPC poses challenges, OpenRouter offers a REST alternative. You sign up at openrouter.ai and obtain an API key there.

Use the OpenAI SDK for compatibility: from openai import OpenAI. Set the base_url to "https://openrouter.ai/api/v1" and api_key to your OpenRouter key.

Create a completion: client.chat.completions.create(model="x-ai/grok-code-fast-1", messages=[{"role": "user", "content": "Generate a sorting algorithm"}]). Print the response content.

This method normalizes parameters across providers. You can optionally add headers such as HTTP-Referer so that OpenRouter can attribute traffic to your application. Pricing aligns with xAI's, and OpenRouter adds no extra fees.

Furthermore, it supports a context window of up to 256,000 tokens, matching direct access. Developers prefer this route for web apps or non-Python environments. The next section covers advanced features.
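
If you would rather not install a client library at all, the same request can be sketched with Python's standard library. The endpoint URL and model name come from this guide; the helper names, the default `temperature` and `max_tokens` values, and the response shape (`choices[0].message.content`, following the OpenAI-compatible format) are assumptions for illustration.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt: str, api_key: str, temperature: float = 0.2,
                  max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for OpenRouter."""
    payload = {
        "model": "x-ai/grok-code-fast-1",
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,  # lower values give more deterministic output
        "max_tokens": max_tokens,    # caps the length of the reply
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

A call such as `send(build_request("Generate a sorting algorithm", your_openrouter_key))` performs the network round trip. Keeping request construction separate from sending makes the payload easy to inspect and test offline.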

Advanced Usage: Function Calling and Structured Outputs

The Grok Code Fast 1 API shines in function calling. You define tools in requests, allowing the model to invoke external functions.

Specify tools as a list of dictionaries with name, description, and parameters. The API decides invocation based on reasoning.
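
As an illustration, the sketch below declares one tool in the OpenAI-compatible format (the shape you would pass as `tools` through the OpenRouter route) and dispatches a tool call to a local Python function. The `count_lines` tool and the `run_tool_call` helper are hypothetical, and the tool-call shape (`function.name` plus a JSON string in `function.arguments`) is assumed from the OpenAI-compatible convention rather than taken from xAI's documentation.

```python
import json


def count_lines(source: str) -> int:
    """Hypothetical local tool: count the lines in a code snippet."""
    return len(source.splitlines())


# Tool declaration: name, description, and JSON Schema parameters.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "count_lines",
            "description": "Count the number of lines in a source code snippet.",
            "parameters": {
                "type": "object",
                "properties": {"source": {"type": "string"}},
                "required": ["source"],
            },
        },
    }
]

# Maps tool names the model may request to local callables.
LOCAL_FUNCTIONS = {"count_lines": count_lines}


def run_tool_call(tool_call: dict) -> str:
    """Execute one tool call and return its result as a JSON string."""
    name = tool_call["function"]["name"]
    if name not in LOCAL_FUNCTIONS:
        raise ValueError(f"Model requested unknown tool: {name}")
    arguments = json.loads(tool_call["function"]["arguments"])
    result = LOCAL_FUNCTIONS[name](**arguments)
    return json.dumps({"result": result})
```

Rejecting unknown tool names before executing anything keeps the model from triggering code you did not explicitly expose.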

For structured outputs, request JSON mode. Set response_format to {"type": "json_object"}. This ensures parsable responses for data extraction.
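
Even with JSON mode enabled, it is worth validating the reply before using it. The following sketch parses the returned text and checks for the keys your code expects; `parse_json_reply` and the example keys are hypothetical.

```python
import json

# Value to pass as response_format in the request, as described above.
RESPONSE_FORMAT = {"type": "json_object"}


def parse_json_reply(content: str, required_keys: set) -> dict:
    """Parse a JSON-mode reply and verify it contains the expected keys."""
    try:
        data = json.loads(content)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object at the top level.")
    missing = required_keys - set(data)
    if missing:
        raise ValueError(f"Reply is missing keys: {sorted(missing)}")
    return data
```

For instance, `parse_json_reply(reply_text, {"language", "code"})` returns a dictionary only when both keys are present, so downstream code can rely on them.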

Moreover, combine these for agentic workflows: the model reasons, calls tools, and structures results. Examples include integrating with databases or APIs.

Handle errors by validating tool outputs. Rate limits apply, so batch requests efficiently. These capabilities elevate the API beyond basic completion.

Integrating Apidog for Efficient Grok Code Fast 1 API Management

Apidog enhances your experience with the Grok Code Fast 1 API. Download and install Apidog to create projects for API testing.

For OpenRouter's REST endpoint, add a new request in Apidog. Set the method to POST, URL to https://openrouter.ai/api/v1/chat/completions, and headers with Authorization: Bearer your_key.

Define body with model "x-ai/grok-code-fast-1" and messages. Send and inspect responses, including reasoning traces.

Furthermore, Apidog generates documentation automatically. Share specs with teams for collaboration.

Use its automation for testing: script assertions to verify output structure. For SDK-based access, mock gRPC if needed, though Apidog excels in REST.

This integration saves time, as Apidog handles authentication states and visualizes workflows. Developers automate regressions, ensuring reliable API usage.

Best Practices for Optimizing Grok Code Fast 1 API Performance

Optimize prompts with clear instructions. Include examples to guide reasoning.

Leverage caching: reuse prefixes for similar queries, reducing token costs.
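
One way to apply this is to keep the unchanging instructions at the start of every request and put the part that varies last. The sketch below assumes that caching matches on identical leading content, which this guide does not spell out; the prefix text and helper name are hypothetical.

```python
# Hypothetical shared instructions; keep this text identical across calls
# so that the leading part of every request is the same.
SHARED_PREFIX = (
    {"role": "system",
     "content": "You are a code reviewer. Reply with concise, numbered findings."},
)


def build_messages(snippet: str) -> list:
    """Place the stable instructions first and the changing snippet last."""
    prefix = [dict(message) for message in SHARED_PREFIX]  # copy to avoid mutation
    return prefix + [{"role": "user", "content": f"Review this code:\n{snippet}"}]
```

Every call to `build_messages` then produces the same opening messages, and only the final user message differs between requests.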

Monitor usage via xAI dashboards to stay within limits. Scale by distributing calls across keys if necessary.

Additionally, tune request parameters: lower the output limit (max_len in the SDK, max_tokens through OpenRouter) for quicker responses. Test iteratively, refining prompts based on the reasoning traces.

Secure keys with environment variables or vaults. Avoid over-reliance on one model; hybridize with others for robustness.

These practices maximize efficiency, turning small adjustments into significant gains.

Troubleshooting Common Issues with Grok Code Fast 1 API

Seeing authentication errors? Verify the key's ACLs and confirm that the key is still valid.

If responses truncate, increase max_len or check context limits.

Rate limit exceeded? Implement exponential backoff in code.
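
A minimal sketch of exponential backoff with a little jitter follows. `RateLimitError` is a hypothetical stand-in for whatever exception your client raises on a rate-limit response, and the delay values are illustrative defaults.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for your client's rate-limit exception."""


def call_with_backoff(func, max_attempts=5, base_delay=1.0, max_delay=30.0,
                      sleep=time.sleep):
    """Call func(), retrying on RateLimitError with doubling delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return func()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Up to 10% jitter so parallel workers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))
```

The `sleep` parameter is injectable so the retry logic can be tested without actually waiting.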

For SDK issues, update pip packages. Debug gRPC with logging enabled.

Moreover, if the reasoning traces are confusing, prompt the model for simpler explanations. Community forums on X provide additional support.

Address these proactively to maintain smooth operations.

Pricing, Rate Limits, and Scalability Considerations

xAI structures pricing transparently. You pay per token, with caching offering savings.

Rate limits protect the service: adhere to 480 RPM and 2M TPM.
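
To stay under both limits on the client side, you can track requests and tokens over a sliding 60-second window. This is a sketch under the assumption that the limits are counted per rolling minute; the class name is hypothetical, and the token count you pass in is your own estimate for the request.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Client-side guard for requests-per-minute and tokens-per-minute."""

    def __init__(self, max_requests=480, max_tokens=2_000_000, window=60.0,
                 clock=time.monotonic):
        self.max_requests = max_requests
        self.max_tokens = max_tokens
        self.window = window
        self.clock = clock
        self.events = deque()      # (timestamp, tokens) for each admitted request
        self.tokens_in_window = 0

    def _expire(self, now: float) -> None:
        # Drop requests that have aged out of the window.
        while self.events and now - self.events[0][0] >= self.window:
            _, tokens = self.events.popleft()
            self.tokens_in_window -= tokens

    def try_acquire(self, tokens: int) -> bool:
        """Return True and record the request if it fits both limits."""
        now = self.clock()
        self._expire(now)
        if len(self.events) >= self.max_requests:
            return False
        if self.tokens_in_window + tokens > self.max_tokens:
            return False
        self.events.append((now, tokens))
        self.tokens_in_window += tokens
        return True
```

When `try_acquire` returns False, wait briefly and try again rather than sending the request and triggering a rate-limit error.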

For scalability, use asynchronous calls and monitor metrics. Enterprise users explore custom plans via x.ai/api.

This model suits startups and large teams alike, balancing cost and performance.

Conclusion: Unlocking Potential with Grok Code Fast 1 API

You now possess the tools to access and harness the Grok Code Fast 1 API effectively. From key generation to advanced integrations, this guide covers essential steps. Implement these techniques to boost your coding productivity.

Remember, tools like Apidog amplify your capabilities—download it free today. As xAI evolves, stay updated via official docs. Start building innovative solutions now.