OpenAI has introduced gpt-realtime, a new speech-to-speech model, alongside significant enhancements to the Realtime API, which is now generally available. The release targets developers who build interactive voice applications: the model processes speech directly, capturing nuances such as tone and non-verbal cues, and generates spoken responses with low latency. This marks a shift in how AI handles real-time conversation.

This update also reflects growing demand for multimodal AI systems. Developers can combine audio, text, and images in a single session, which opens up applications in customer service, virtual assistants, and interactive entertainment. As we explore these advancements, consider how small refinements in API design can lead to substantial improvements in user experience.

Understanding GPT-Realtime: The Core Model

gpt-realtime is a specialized model built for end-to-end speech-to-speech interaction. Traditional voice pipelines chain separate stages for speech recognition, language processing, and text-to-speech synthesis; gpt-realtime handles all of them in a single model, which reduces latency and preserves subtleties of human speech that are lost when audio is converted to text and back.

gpt-realtime also generates natural-sounding audio that follows fine-grained instructions such as "speak quickly and professionally" or "adopt an empathetic tone with a French accent." This control lets developers tailor a voice to a specific scenario, which improves engagement in real-world applications.

The model also interprets native audio input more accurately. It detects non-verbal cues, such as laughter or pauses, and adapts accordingly. If a user switches languages mid-sentence, gpt-realtime follows suit without disruption.

Instruction following has improved as well: gpt-realtime scores 30.5% on the MultiChallenge audio benchmark, which measures instruction-following accuracy, a notable improvement over OpenAI's previous realtime model.

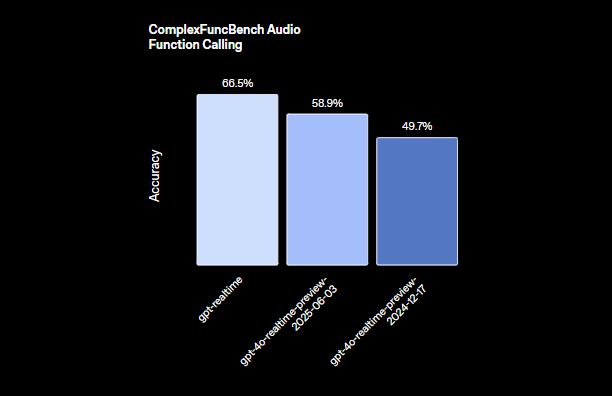

gpt-realtime also improves function calling, scoring 66.5% on ComplexFuncBench. Function calls can now run asynchronously: while your application executes a long-running call, such as a database query, the model keeps the conversation going with filler responses or updates instead of stalling until the result arrives.

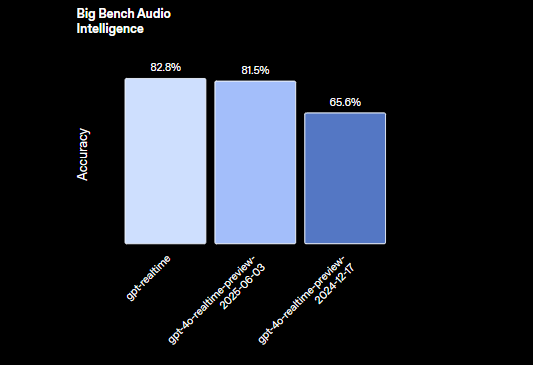

gpt-realtime also scores 82.8% on Big Bench Audio, a benchmark of reasoning ability. This means it can handle complex queries that involve logical deduction directly from audio input, without first converting speech to text.

OpenAI also introduces two new voices, Marin and Cedar, exclusive to this model, and updates its eight existing voices to be more expressive. These enhancements make AI interactions feel more human-like, bridging the gap between scripted responses and genuine dialogue.

In practice, developers can use gpt-realtime to build applications that respond in real time, such as live translation services or interactive storytelling tools. The model itself runs on OpenAI's infrastructure and is accessed through the API, so client applications on phones, browsers, or edge devices stay lightweight: they stream audio to the API and play back the response.

Key Features of the Realtime API

The Realtime API receives substantial upgrades, complementing gpt-realtime's capabilities. OpenAI equips it with features that facilitate production-ready voice agents, focusing on reliability, scalability, and integration ease.

First, the API now supports remote MCP (Model Context Protocol) servers. Developers can point a session at an external MCP server that provides tools, such as Stripe's server for payments, and the API handles the resulting tool calls. This simplifies workflows by offloading specific functions to specialized services. You specify the server URL, authorization token, and approval requirements directly in the session configuration.

Next, image input extends the API's multimodal scope. Applications can add images, photos, or screenshots to an ongoing session, enabling visually grounded conversations. For instance, a user uploads a diagram, and the AI describes it or answers questions about its content. Images are treated as static inputs rather than a live video feed: the application decides which images to share and when, which keeps the context under its control.

Furthermore, SIP (Session Initiation Protocol) support connects the API to public phone networks, PBX systems, and desk phones. This bridges digital AI with traditional telephony, allowing voice agents to handle calls from landlines or mobiles seamlessly.

Reusable prompts represent another key addition. Developers save and reuse developer messages, tools, variables, and examples across multiple sessions. This promotes consistency and reduces setup time for recurring interactions, such as standard customer support scripts.

The API is built for low-latency interaction and reliability in production. It accepts multimodal input (audio and images) while maintaining session state, which prevents context loss in extended conversations.

In terms of audio handling, the Realtime API directly interfaces with gpt-realtime to generate expressive speech. It captures nuances that traditional systems often discard, leading to more engaging user experiences.

Developers also benefit from enterprise-grade features, including EU Data Residency for compliance and privacy commitments that safeguard sensitive data.

Taken together, these updates make the API more practical for production use. For example, asynchronous function calling prevents bottlenecks: the conversation continues while a tool runs instead of stalling until it returns.

How to Use the GPT-Realtime API: A Step-by-Step Guide

Developers integrate the gpt-realtime API through straightforward endpoints and configurations. Start by obtaining API keys from the OpenAI platform, ensuring your account supports the Realtime API.

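To let a client such as a browser connect without exposing your standard API key, first create a short-lived client secret by sending a POST request from your server to the client secrets endpoint. Include session parameters such as the session type and tools. For remote MCP integration, structure the payload as follows: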

```json
// POST /v1/realtime/client_secrets
{
  "session": {
    "type": "realtime",
    "tools": [
      {
        "type": "mcp",
        "server_label": "stripe",
        "server_url": "https://mcp.stripe.com",
        "authorization": "{access_token}",
        "require_approval": "never"
      }
    ]
  }
}
```

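This configuration registers Stripe's MCP server as a tool source for payments. Replace {access_token} with a valid access token for that server; because require_approval is set to "never", the API routes tool calls to the server without asking for approval each time.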
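The same request can be issued from a server-side Python script. The following is a minimal sketch using only the standard library; it assumes the full URL is https://api.openai.com/v1/realtime/client_secrets and that authentication uses the usual Bearer API key header. It builds the request separately from sending it so the payload can be inspected, and it returns the parsed JSON response without assuming any particular field names.

```python
import json
import urllib.request

# Assumed full URL for the endpoint named in the payload comment above.
CLIENT_SECRETS_URL = "https://api.openai.com/v1/realtime/client_secrets"


def build_client_secret_request(api_key: str, mcp_token: str) -> urllib.request.Request:
    """Build (but do not send) the POST that creates a realtime client secret."""
    payload = {
        "session": {
            "type": "realtime",
            "tools": [
                {
                    "type": "mcp",
                    "server_label": "stripe",
                    "server_url": "https://mcp.stripe.com",
                    "authorization": mcp_token,
                    "require_approval": "never",
                }
            ],
        }
    }
    return urllib.request.Request(
        CLIENT_SECRETS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # standard API key, server side only
            "Content-Type": "application/json",
        },
        method="POST",
    )


def create_client_secret(api_key: str, mcp_token: str) -> dict:
    """Send the request and return the parsed JSON response as-is."""
    request = build_client_secret_request(api_key, mcp_token)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))
```

Call create_client_secret on your backend and hand only the resulting short-lived secret to the browser or mobile client, so the long-lived API key never leaves the server.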

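Once you have a session, connect to it for real-time interaction. Browser and mobile clients typically connect over WebRTC using the client secret, while server-side applications commonly use a WebSocket to the Realtime API endpoint. Over a WebSocket, audio is not sent as raw binary frames: each chunk is base64-encoded and wrapped in a JSON input_audio_buffer.append event, and the API streams generated audio back inside JSON events in the same way.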
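The sketch below shows that JSON framing for outgoing audio: raw audio is split into chunks, and each chunk is base64-encoded inside an input_audio_buffer.append event. It assumes the session's input format is 16-bit little-endian PCM, mono, at 24 kHz, and that 100 ms chunks are a reasonable size; check both against the current API reference and your session configuration.

```python
import base64
import json

SAMPLE_RATE_HZ = 24_000  # assumption: 24 kHz mono PCM16 input
BYTES_PER_SAMPLE = 2     # 16-bit samples


def pcm16_to_append_events(pcm: bytes, chunk_ms: int = 100) -> list[str]:
    """Split raw PCM16 audio into JSON input_audio_buffer.append events."""
    chunk_bytes = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * chunk_ms // 1000
    events = []
    for start in range(0, len(pcm), chunk_bytes):
        chunk = pcm[start:start + chunk_bytes]
        events.append(json.dumps({
            "type": "input_audio_buffer.append",
            "audio": base64.b64encode(chunk).decode("ascii"),  # base64 text, not binary
        }))
    return events
```

Each returned string is sent as one WebSocket text message. If turn detection is disabled for the session, the client is also responsible for sending input_audio_buffer.commit followed by response.create to end the user's turn and request a reply.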

For audio input, capture the user's speech, encode it in a supported format such as 16-bit PCM, and stream it to the API. gpt-realtime analyzes the audio and generates responses based on the session context. To incorporate images, use the conversation item creation event:

```json
{
  "type": "conversation.item.create",
  "previous_item_id": null,
  "item": {
    "type": "message",
    "role": "user",
    "content": [
      {
        "type": "input_image",
        "image_url": "data:image/png;base64,{base64_image_data}"
      }
    ]
  }
}
```

Replace {base64_image_data} with the actual base64-encoded image. This adds visual context, allowing the AI to reference it in responses.
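In application code, the base64 data URL is usually built from a file or an upload. A small sketch that wraps raw PNG bytes in the event shown above, using only the standard library:

```python
import base64
import json


def image_item_event(png_bytes: bytes) -> str:
    """Wrap raw PNG bytes in a conversation.item.create event as a base64 data URL."""
    data_url = "data:image/png;base64," + base64.b64encode(png_bytes).decode("ascii")
    return json.dumps({
        "type": "conversation.item.create",
        "previous_item_id": None,  # serialized as JSON null
        "item": {
            "type": "message",
            "role": "user",
            "content": [{"type": "input_image", "image_url": data_url}],
        },
    })
```

Read the image file in binary mode, pass the bytes to image_item_event, and send the resulting string as a single message on the session's connection.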

Manage session state by setting token limits and truncating older turns to control costs. For long conversations, periodically clear unnecessary history while retaining key details.
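One way to prune history yourself is to delete old conversation items explicitly. The sketch below assumes the application keeps its own ordered list of items with rough token estimates (hypothetical values, not figures reported by the API) and emits conversation.item.delete events for the oldest unpinned items until the estimate fits a budget. Pinned items stand in for the key details worth retaining. This client-side approach is an alternative or complement to the API's own truncation settings, which are not shown here.

```python
import json
from dataclasses import dataclass


@dataclass
class TrackedItem:
    item_id: str
    est_tokens: int       # hypothetical: your own estimate for this item
    pinned: bool = False  # keep key details regardless of age


def trim_history(items: list[TrackedItem], budget: int) -> list[str]:
    """Return conversation.item.delete events for the oldest unpinned items
    until the estimated total fits within `budget` tokens."""
    total = sum(item.est_tokens for item in items)
    events = []
    for item in items:  # items are ordered oldest first
        if total <= budget:
            break
        if item.pinned:
            continue
        events.append(json.dumps({"type": "conversation.item.delete", "item_id": item.item_id}))
        total -= item.est_tokens
    return events
```

After sending the events, remove the same items from your local list so the estimates stay in sync with the server-side conversation.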

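To handle function calls, define tools in the session setup. When the model decides to call a function, the API sends your application the function name, a call ID, and JSON-encoded arguments. Your application runs the function, returns the result as a function_call_output item, and then asks the model to continue. Because calls can run asynchronously, the model can keep talking to the user, for example with interim updates, while a long-running call completes.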
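The application side of that exchange can be sketched as follows: the application, not the API, runs the function and reports the result. The sketch assumes the call's name, call_id, and arguments string are available together, for example from a function_call item in the output of a response.done event; the lookup_order tool is a hypothetical stand-in for your own functions.

```python
import json
from typing import Callable

# Hypothetical tool registry: map each function name to your own implementation.
TOOLS: dict[str, Callable[..., dict]] = {
    "lookup_order": lambda order_id: {"order_id": order_id, "status": "shipped"},
}


def handle_function_call(call: dict) -> list[str]:
    """Run the requested tool and return the client events that report its result."""
    try:
        args = json.loads(call["arguments"])  # arguments arrive as a JSON string
        result = TOOLS[call["name"]](**args)
    except Exception as exc:  # report failures to the model instead of crashing the loop
        result = {"error": str(exc)}
    output_item = {
        "type": "conversation.item.create",
        "item": {
            "type": "function_call_output",
            "call_id": call["call_id"],  # ties this output to the model's call
            "output": json.dumps(result),
        },
    }
    # Ask the model to continue the conversation using the new result.
    return [json.dumps(output_item), json.dumps({"type": "response.create"})]
```

In a live agent, run handle_function_call in a worker thread or background task (for example with asyncio.to_thread) so the receive loop keeps processing events while the tool executes; that separation is what lets the conversation continue during long calls.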

For SIP integration, configure your application to route calls through compatible gateways. This involves setting up SIP trunks and linking them to the Realtime API sessions.

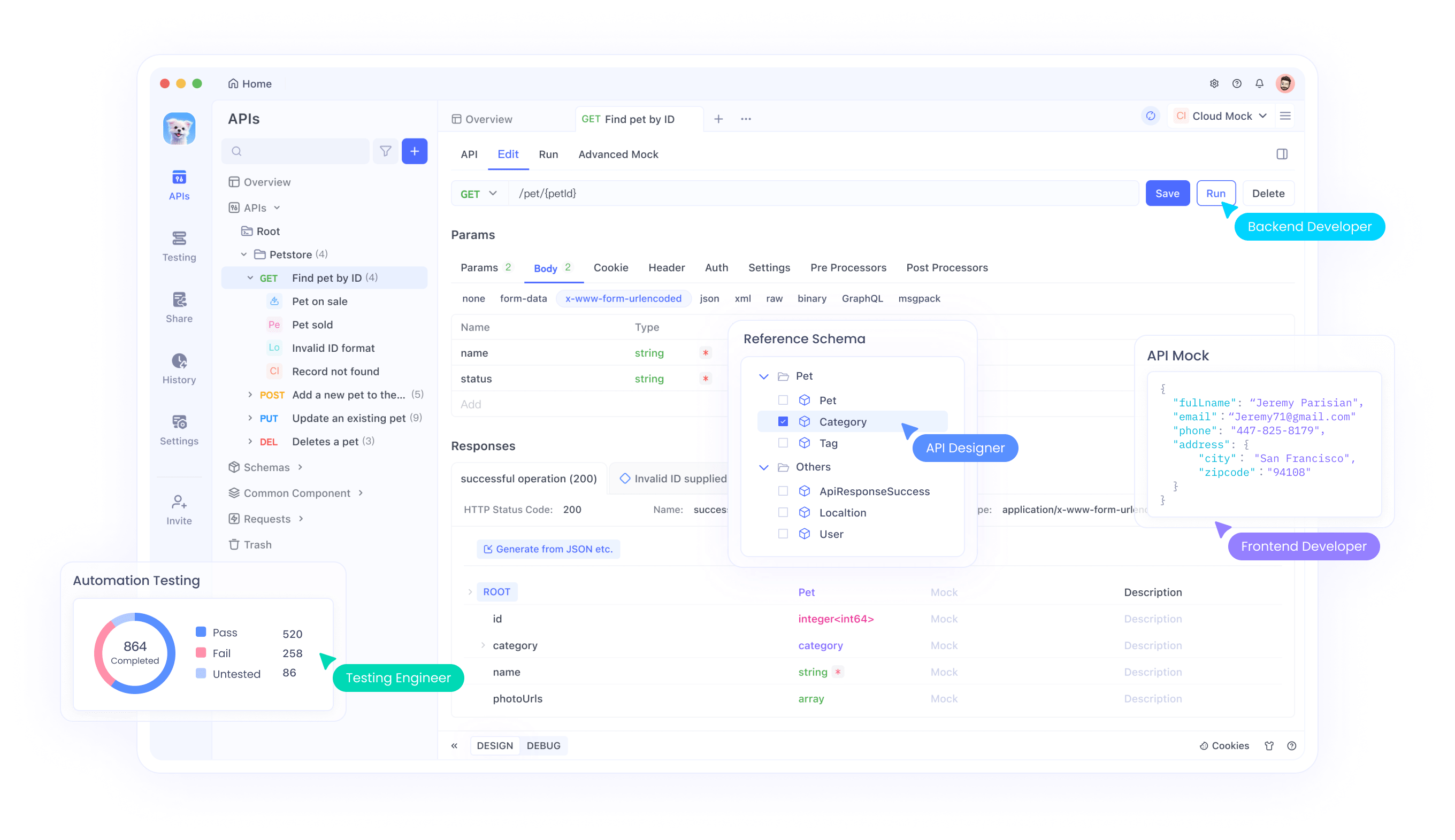

Testing these integrations proves crucial. Here, Apidog shines as an API management tool. It supports WebSocket testing, allowing you to simulate real-time audio exchanges and inspect responses. Download Apidog for free to mock endpoints, validate payloads, and ensure seamless connectivity with gpt-realtime.

In practice, build a simple voice agent by combining these elements: capture microphone input, stream it to the API, and play back the generated audio. The browser's WebSocket API in JavaScript or Python's websockets package makes this straightforward.
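The playback half of that loop can be sketched with the standard library alone. It assumes audio comes back in delta events whose delta field holds base64-encoded PCM16; the two event names listed are, as we understand them, the GA name and the older beta name, so verify them against the current API reference. Handing the drained bytes to an actual speaker is left to your audio library.

```python
import base64

# Assumption: GA and older beta names for server events carrying audio chunks.
AUDIO_DELTA_TYPES = {"response.output_audio.delta", "response.audio.delta"}


class PlaybackBuffer:
    """Collects decoded audio chunks from server events for a speaker to drain."""

    def __init__(self) -> None:
        self._chunks: list[bytes] = []

    def feed(self, event: dict) -> bool:
        """Decode an audio delta event; return False for any other event type."""
        if event.get("type") not in AUDIO_DELTA_TYPES:
            return False
        self._chunks.append(base64.b64decode(event["delta"]))
        return True

    def drain(self) -> bytes:
        """Return all buffered audio and reset the buffer."""
        audio = b"".join(self._chunks)
        self._chunks.clear()
        return audio
```

When the user interrupts the assistant, calling drain and discarding the result is a simple way to stop stale audio from playing.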

Monitor latency by timing how long the first audio of each reply takes to arrive after the user stops speaking. OpenAI positions the API for low-latency production use, but actual delay depends on network conditions, so measure it in your own deployment.
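A small tracker can time the gap between the end of the user's speech and the first audio chunk of the reply. This sketch assumes server-side voice activity detection, so you would call user_turn_ended when the input_audio_buffer.speech_stopped event arrives and first_audio_received on every audio delta; only the first delta of each response is counted. The clock is injectable so the logic can be tested without real time passing.

```python
import time
from statistics import median
from typing import Callable, Optional


class LatencyTracker:
    """Measures the gap between end of user speech and the first audio chunk back."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._turn_start: Optional[float] = None
        self.samples_ms: list[float] = []

    def user_turn_ended(self) -> None:
        self._turn_start = self._clock()

    def first_audio_received(self) -> None:
        if self._turn_start is None:
            return  # later chunks of the same response are ignored
        self.samples_ms.append((self._clock() - self._turn_start) * 1000)
        self._turn_start = None

    def median_ms(self) -> float:
        return median(self.samples_ms)
```

Reporting the median rather than the mean keeps one slow network round trip from skewing the figure.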

Handle errors gracefully, such as retrying failed connections or falling back to text-based interactions if audio processing encounters issues.
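For reconnection, a common pattern is exponential backoff with random jitter. The sketch below is generic rather than specific to the Realtime API: connect is any coroutine function you supply (for example, one that opens the WebSocket), and it retries only on OSError, which covers ConnectionError and most socket failures. Client libraries often raise their own exception types, so extend the except clause for the library you use.

```python
import asyncio
import random
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 0.5, cap: float = 8.0) -> list[float]:
    """Maximum wait before each retry: base, 2*base, 4*base, ... never above cap."""
    return [min(cap, base * 2 ** i) for i in range(retries)]


async def with_retries(connect: Callable[[], Awaitable[T]], attempts: int = 5,
                       base: float = 0.5) -> T:
    """Await connect() until it succeeds, sleeping a random ("full jitter") delay between tries."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    delays = backoff_delays(attempts - 1, base=base)
    for attempt in range(attempts):
        try:
            return await connect()
        except OSError:  # ConnectionError and most socket errors subclass OSError
            if attempt == attempts - 1:
                raise  # out of retries: let the caller fall back
            await asyncio.sleep(random.uniform(0, delays[attempt]))
    raise RuntimeError("unreachable")  # the loop always returns or raises
```

If every attempt fails, the final exception propagates, which is the natural point to switch the user to a text-based fallback.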

Extending this, incorporate reusable prompts. Save a prompt whose instructions say, for example, "Always respond empathetically," and reference it when configuring new sessions, filling in any variables it defines.
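As a sketch, applying a saved prompt might look like the session.update event built below. The prompt object shape (id, optional version, variables) is an assumption modeled on how OpenAI references saved prompts, and pmpt_123 and the tone variable are hypothetical; check the current Realtime API reference before relying on it.

```python
import json
from typing import Optional


def session_update_with_prompt(prompt_id: str, variables: dict[str, str],
                               version: Optional[str] = None) -> str:
    """Build a session.update event that applies a saved, reusable prompt (assumed shape)."""
    prompt: dict = {"id": prompt_id, "variables": variables}
    if version is not None:
        prompt["version"] = version  # pin a specific saved version
    return json.dumps({
        "type": "session.update",
        "session": {"type": "realtime", "prompt": prompt},
    })
```

Keeping the prompt on the server side of the API means every new session starts from the same instructions, tools, and examples without resending them.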

For advanced usage, combine gpt-realtime with other OpenAI models. Route complex reasoning to GPT-4o while using gpt-realtime for audio I/O, creating hybrid systems.

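Security considerations include encrypting data in transit (connect only over TLS, that is, https and wss) and managing access tokens securely: keep your standard API key on the server and give browser or mobile clients only short-lived client secrets. OpenAI's privacy commitments help, but implement additional safeguards for sensitive applications.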

Integrating Apidog for Efficient API Management

Apidog emerges as a vital tool for developers working with the gpt-realtime API. This platform offers comprehensive API testing, documentation, and collaboration features, tailored for complex integrations like real-time WebSockets.

Engineers use Apidog to design API requests visually, import OpenAPI specifications, and run automated tests. For the Realtime API, simulate audio streams and verify multimodal inputs without writing extensive code.

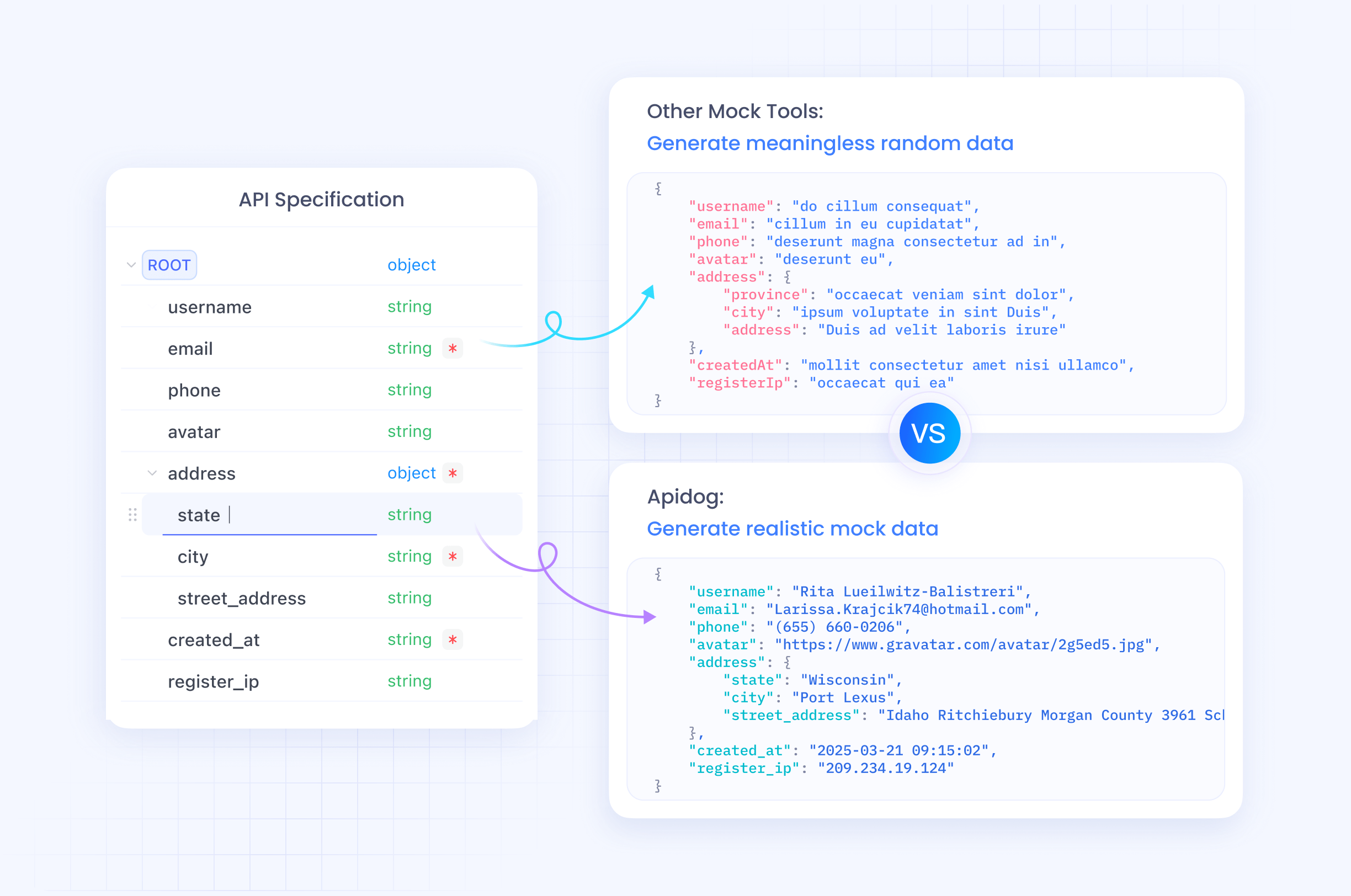

Moreover, Apidog's mocking capabilities allow prototyping before full implementation. Create mock servers that mimic gpt-realtime responses, accelerating development cycles.

The tool supports team collaboration, sharing test cases and environments. This proves invaluable for distributed teams building voice agents.

Since Apidog handles base64 encoding for images and binary data for audio, it simplifies debugging. Track request/response cycles in real time, identifying bottlenecks early.

Transitioning to deployment, use Apidog's monitoring to ensure API uptime and performance post-launch.

Pricing, Availability, and Future Implications

gpt-realtime is priced 20% below the preview model, gpt-4o-realtime-preview: $32 per 1M audio input tokens ($0.40 per 1M cached input tokens) and $64 per 1M audio output tokens. Controls for limiting context and truncating sessions help keep usage efficient.
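To see how these rates combine, the following sketch estimates the audio portion of a bill. It assumes cached input tokens are a subset of the input token count, and it ignores text and image tokens, which are billed at separate rates not covered here.

```python
# Audio prices quoted above, in USD per 1M tokens.
PRICE_PER_M = {"input": 32.00, "cached_input": 0.40, "output": 64.00}


def audio_cost_usd(input_tokens: int, cached_input_tokens: int, output_tokens: int) -> float:
    """Estimate gpt-realtime audio cost; cached tokens are counted within input_tokens."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_input_tokens
    return (uncached * PRICE_PER_M["input"]
            + cached_input_tokens * PRICE_PER_M["cached_input"]
            + output_tokens * PRICE_PER_M["output"]) / 1_000_000
```

For example, 100,000 audio input tokens (40,000 of them cached) plus 50,000 audio output tokens come to 60,000 × $32/1M + 40,000 × $0.40/1M + 50,000 × $64/1M = $1.92 + $0.016 + $3.20 = $5.136.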

The Realtime API and gpt-realtime became generally available to all developers on August 28, 2025, with EU Data Residency support for EU-based applications.

Looking ahead, these advancements pave the way for more widespread voice AI. Healthcare providers could use it for patient interactions, and educators for interactive tutoring.

However, challenges remain, such as ensuring ethical use and mitigating biases in audio processing.

In summary, OpenAI's gpt-realtime and Realtime API redefine real-time AI, offering tools that developers harness for innovative applications. Small tweaks in integration yield significant gains, emphasizing precise implementation.